# Image-Text Retrieval Embedding with BLIP

*author: David Wang*

## Description

This operator extracts features for an image or text with [BLIP](https://arxiv.org/abs/2201.12086), which generates embeddings for text and images by jointly training an image encoder and a text encoder to maximize the cosine similarity between matching image-text pairs. This is an adaptation of [salesforce/BLIP](https://github.com/salesforce/BLIP).
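To make the cosine-similarity objective concrete, here is a minimal sketch in plain Python. The function `cosine_similarity` and the three-dimensional toy vectors are illustrative assumptions, not part of this operator; real BLIP embeddings have many more dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length, non-zero vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


# Made-up toy vectors standing in for real embeddings.
image_vec = [1.0, 2.0, 2.0]
matching_text_vec = [2.0, 4.0, 4.0]    # points in the same direction as image_vec
unrelated_text_vec = [2.0, -1.0, 0.0]  # orthogonal to image_vec

matching_score = cosine_similarity(image_vec, matching_text_vec)
unrelated_score = cosine_similarity(image_vec, unrelated_text_vec)
```

A score close to 1 means the two vectors point in nearly the same direction, which is what training encourages for an image and its matching caption; a score near 0 indicates unrelated content.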

## Code Example

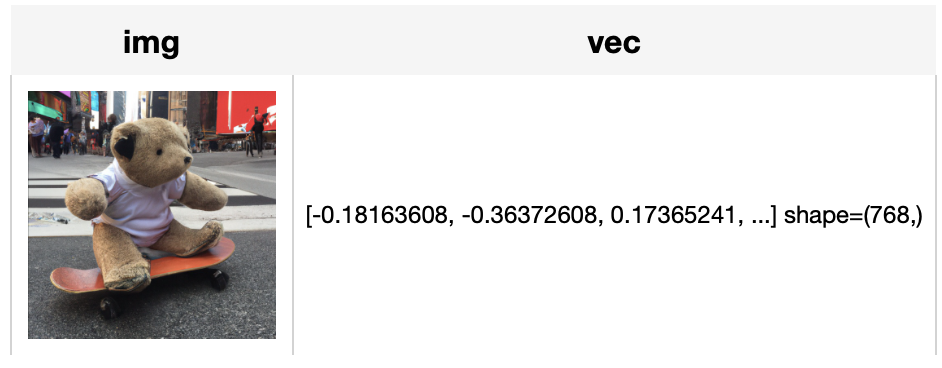

Load an image from path './teddy.jpg' to generate an image embedding.

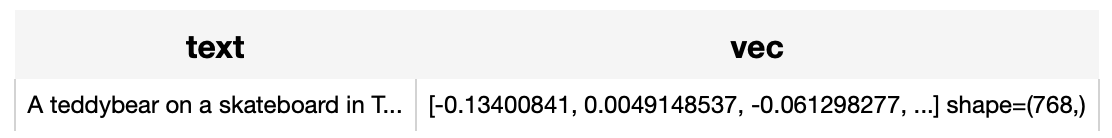

Read the text 'A teddybear on a skateboard in Times Square.' to generate a text embedding.

*Write a pipeline with explicit input/output name specifications:*

```python
from towhee.dc2 import pipe, ops, DataCollection

# Image pipeline: decode the image at the given path, then embed it with BLIP.
img_pipe = (
    pipe.input('url')
    .map('url', 'img', ops.image_decode.cv2_rgb())
    .map('img', 'vec', ops.image_text_embedding.blip(model_name='blip_itm_base_coco', modality='image'))
    .output('img', 'vec')
)

# Text pipeline: embed the input string with the same BLIP model.
text_pipe = (
    pipe.input('text')
    .map('text', 'vec', ops.image_text_embedding.blip(model_name='blip_itm_base_coco', modality='text'))
    .output('text', 'vec')
)

DataCollection(img_pipe('./teddy.jpg')).show()
DataCollection(text_pipe('A teddybear on a skateboard in Times Square.')).show()
```

## Factory Constructor

Create the operator via the following factory method:

***blip(model_name, modality)***

**Parameters:**

***model_name:*** *str*

The model name of BLIP. Supported model names:

- blip_itm_base_coco

- blip_itm_large_coco

- blip_itm_base_flickr

- blip_itm_large_flickr

***modality:*** *str*

The modality (*image* or *text*) used to generate the embedding.
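Because both parameters are plain strings, a typo only surfaces once the operator is built. The sketch below shows one way to check the arguments up front. `check_blip_args` is a hypothetical helper written for illustration, not part of towhee; the accepted values are copied from the lists above.

```python
# Values taken from the parameter documentation above.
SUPPORTED_MODEL_NAMES = (
    "blip_itm_base_coco",
    "blip_itm_large_coco",
    "blip_itm_base_flickr",
    "blip_itm_large_flickr",
)
SUPPORTED_MODALITIES = ("image", "text")


def check_blip_args(model_name, modality):
    """Hypothetical helper: validate the arguments and return them as kwargs."""
    if model_name not in SUPPORTED_MODEL_NAMES:
        raise ValueError(
            f"unsupported model_name {model_name!r}; "
            f"expected one of {SUPPORTED_MODEL_NAMES}"
        )
    if modality not in SUPPORTED_MODALITIES:
        raise ValueError(
            f"unsupported modality {modality!r}; "
            f"expected one of {SUPPORTED_MODALITIES}"
        )
    return {"model_name": model_name, "modality": modality}


kwargs = check_blip_args("blip_itm_base_coco", "image")
# The validated kwargs could then be passed on, for example:
# ops.image_text_embedding.blip(**kwargs)
```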

## Interface

An image-text embedding operator takes a [towhee image](link/to/towhee/image/api/doc) or a string as input and generates an embedding as a numpy.ndarray.

***save_model(format='pytorch', path='default')***

Save the model to local storage in the specified format.

**Parameters:**

***format***: *str*

The format of the saved model. Defaults to 'pytorch'.

***path***: *str*

The path where the model is saved. By default, the model is saved to the operator directory.

```python
from towhee import ops

op = ops.image_text_embedding.blip(model_name='blip_itm_base_coco', modality='image').get_op()
op.save_model('onnx', 'test.onnx')
```

**Parameters:**

***data:*** *towhee.types.Image (a sub-class of numpy.ndarray)* or *str*

The data (image or text, depending on the specified modality) from which to generate the embedding.

**Returns:** *numpy.ndarray*

The embedding of the input data extracted by the model.
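Once image and text embeddings are available, retrieval amounts to ranking candidates by their similarity to a query. The following sketch ranks caption embeddings against one image embedding. `rank_by_cosine` is a hypothetical helper, and the short vectors are made-up stand-ins for the ndarrays the operator returns; the function only iterates over its inputs, so it should accept one-dimensional ndarrays as well as lists.

```python
import math


def rank_by_cosine(query_vec, candidates):
    """Return (label, score) pairs sorted by descending cosine similarity.

    candidates maps a label (e.g. a caption) to its embedding vector.
    All vectors are assumed to be non-zero and of equal length.
    """
    def norm(vec):
        return math.sqrt(sum(x * x for x in vec))

    query_norm = norm(query_vec)
    scored = []
    for label, vec in candidates.items():
        dot = sum(q * c for q, c in zip(query_vec, vec))
        scored.append((label, dot / (query_norm * norm(vec))))
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Made-up toy embeddings for illustration only.
image_vec = [0.9, 0.1, 0.0]
text_vecs = {
    "A teddybear on a skateboard in Times Square.": [0.8, 0.2, 0.1],
    "A bowl of fruit on a table.": [0.0, 0.1, 0.9],
}

ranking = rank_by_cosine(image_vec, text_vecs)
best_caption, best_score = ranking[0]
```

For large collections, a vector database or a batched matrix product would typically replace this loop; the pure-Python version is only meant to show the ranking logic.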