# Image-Text Retrieval Embedding with CLIP

*author: David Wang*

## Description

This operator extracts features from images or text with [CLIP](https://arxiv.org/abs/2103.00020). CLIP jointly trains an image encoder and a text encoder to maximize the cosine similarity between matching image-text pairs, so images and text are embedded in a shared space.
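The training objective can be illustrated with a small pure-Python sketch of CLIP's symmetric contrastive loss. In a batch of N image-text pairs, each image should score its own caption highest among all captions, and each caption should score its own image highest. This is an illustration only, not the operator's code: it assumes a fixed temperature of 0.07 (CLIP's initial value, which the real model learns) and uses hand-made 2-d vectors.

```python
import math


def l2_normalize(v):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def cosine_matrix(image_embs, text_embs):
    """Pairwise cosine similarities: rows are images, columns are texts."""
    imgs = [l2_normalize(v) for v in image_embs]
    txts = [l2_normalize(v) for v in text_embs]
    return [[sum(a * b for a, b in zip(i, t)) for t in txts] for i in imgs]


def cross_entropy(logits, target):
    """Cross-entropy of one row of logits against the index of the correct class."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_sum_exp - logits[target]


def clip_loss(image_embs, text_embs, temperature=0.07):
    """Symmetric contrastive loss: the i-th image and the i-th text are a positive pair."""
    sims = cosine_matrix(image_embs, text_embs)
    logits = [[s / temperature for s in row] for row in sims]
    n = len(logits)
    # Image-to-text direction: each row should pick its own caption.
    loss_i2t = sum(cross_entropy(logits[i], i) for i in range(n)) / n
    # Text-to-image direction: each column should pick its own image.
    cols = [[logits[i][j] for i in range(n)] for j in range(n)]
    loss_t2i = sum(cross_entropy(cols[j], j) for j in range(n)) / n
    return (loss_i2t + loss_t2i) / 2


# Captions that point in the same direction as their images give a low loss;
# swapping the captions gives a high loss.
aligned = clip_loss([[1.0, 0.0], [0.0, 1.0]], [[0.9, 0.1], [0.1, 0.9]])
shuffled = clip_loss([[1.0, 0.0], [0.0, 1.0]], [[0.1, 0.9], [0.9, 0.1]])
print(aligned < shuffled)  # True
```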

## Code Example

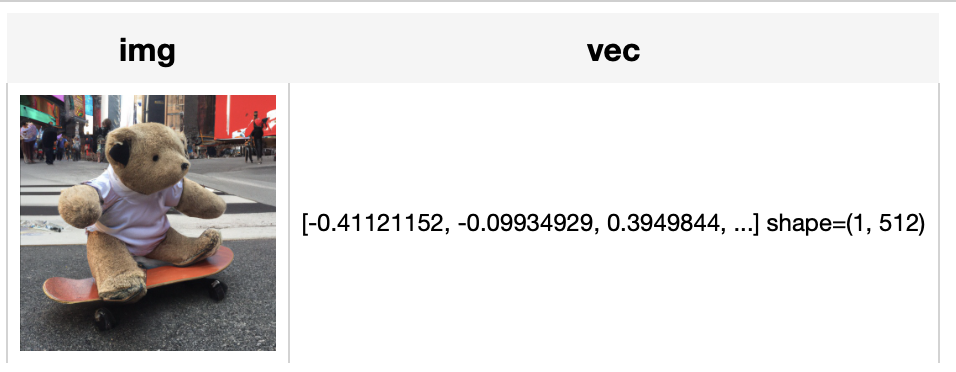

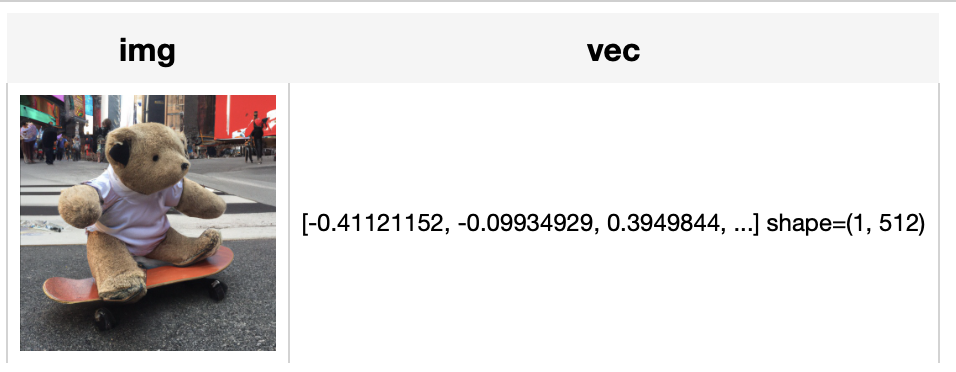

Load an image from path './teddy.jpg' to generate an image embedding.

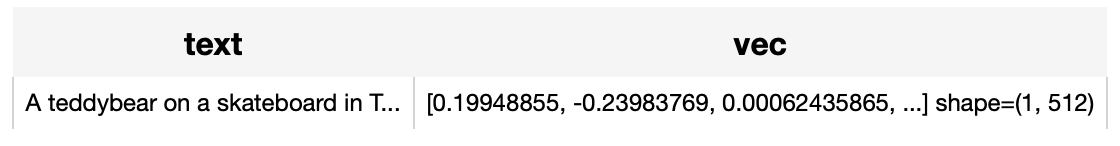

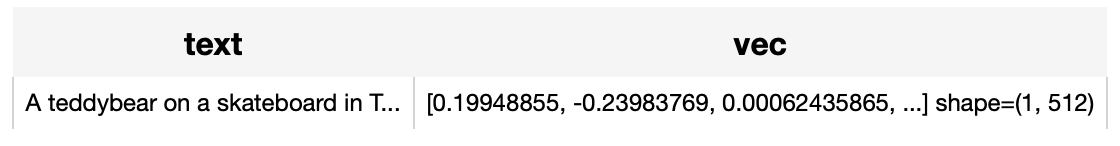

Read the text 'A teddybear on a skateboard in Times Square.' to generate a text embedding.

*Write a pipeline with explicit input/output names:*

```python
from towhee import pipe, ops, DataCollection

# Image pipeline: decode the image at 'url', then embed it with CLIP's image encoder.
img_pipe = (
    pipe.input('url')
        .map('url', 'img', ops.image_decode.cv2_rgb())
        .map('img', 'vec', ops.image_text_embedding.clip(model_name='clip_vit_base_patch16', modality='image'))
        .output('img', 'vec')
)

# Text pipeline: embed the input string with CLIP's text encoder.
text_pipe = (
    pipe.input('text')
        .map('text', 'vec', ops.image_text_embedding.clip(model_name='clip_vit_base_patch16', modality='text'))
        .output('text', 'vec')
)

DataCollection(img_pipe('./teddy.jpg')).show()
DataCollection(text_pipe('A teddybear on a skateboard in Times Square.')).show()
```
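Because both pipelines produce vectors in the same embedding space, text-to-image retrieval comes down to ranking image vectors by their cosine similarity to the text vector. A minimal sketch, in which the hypothetical `images` and `query` values are short toy vectors standing in for the much longer `vec` outputs of `img_pipe` and `text_pipe`:

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_images(text_vec, image_vecs):
    """Return image ids sorted from most to least similar to the text query."""
    scores = {img_id: cosine(text_vec, vec) for img_id, vec in image_vecs.items()}
    return sorted(scores, key=scores.get, reverse=True)


# Hypothetical toy embeddings; real CLIP embeddings have hundreds of dimensions.
images = {
    'teddy.jpg': [0.9, 0.1, 0.2],
    'cat.jpg': [0.1, 0.8, 0.3],
    'car.jpg': [0.1, 0.1, 0.9],
}
query = [0.8, 0.2, 0.1]
print(rank_images(query, images))  # ['teddy.jpg', 'cat.jpg', 'car.jpg']
```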

## Factory Constructor

Create the operator via the following factory method:

***clip(model_name, modality, checkpoint_path=None)***

**Parameters:**

***model_name:*** *str*

The name of the CLIP model. Supported model names:

- clip_vit_base_patch16

- clip_vit_base_patch32

- clip_vit_large_patch14

- clip_vit_large_patch14_336
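The names encode the Vision Transformer size (base or large) and the patch size in pixels; the `_336` suffix denotes a 336 px input instead of 224 px. Smaller patches mean more tokens per image and therefore more compute. The arithmetic below is a sketch that assumes the standard OpenAI CLIP input resolutions (224 px, or 336 px for the `_336` variant) and counts patch tokens only, not the extra class token.

```python
# Input resolution and patch size per model, assuming the standard OpenAI CLIP checkpoints.
MODELS = {
    'clip_vit_base_patch16': (224, 16),
    'clip_vit_base_patch32': (224, 32),
    'clip_vit_large_patch14': (224, 14),
    'clip_vit_large_patch14_336': (336, 14),
}


def num_patches(image_size, patch_size):
    """Number of non-overlapping square patches a ViT splits a square image into."""
    per_side = image_size // patch_size
    return per_side * per_side


for name, (size, patch) in MODELS.items():
    print(f'{name}: {size}px image, {patch}px patches -> {num_patches(size, patch)} patches')
```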

***modality:*** *str*

The modality (*image* or *text*) used to generate the embedding.

***checkpoint_path:*** *str*

The path to a local checkpoint, defaults to None.

If None, the operator downloads and loads the pretrained model specified by `model_name` from Hugging Face Transformers.

## Interface

An image-text embedding operator takes a [towhee image](link/to/towhee/image/api/doc) or a string as input and generates an embedding as a numpy.ndarray.

***save_model(format='pytorch', path='default')***

Save the model locally in the specified format.

**Parameters:**

***format***: *str*

The format of the saved model, defaults to 'pytorch'.

***path***: *str*

The path where the model is saved. By default, the model is saved to the operator directory.

```python
from towhee import ops

op = ops.image_text_embedding.clip(model_name='clip_vit_base_patch16', modality='image').get_op()
# Save the model in ONNX format to 'test.onnx'
op.save_model('onnx', 'test.onnx')
```

When called, the operator takes the following input:

**Parameters:**

***data:*** *towhee.types.Image (a sub-class of numpy.ndarray)* or *str*

The data (an image or text, depending on the specified modality) to generate the embedding for.

**Returns:** *numpy.ndarray*

The embedding extracted by the model.

***supported_model_names(format=None)***

Return a list of all supported model names, or only the model names that support the specified format.

**Parameters:**

***format***: *str*

The model format, such as 'pytorch' or 'torchscript'. Defaults to None, which returns all supported model names.

```python
from towhee import ops

op = ops.image_text_embedding.clip(model_name='clip_vit_base_patch16', modality='image').get_op()
full_list = op.supported_model_names()
onnx_list = op.supported_model_names(format='onnx')
print(f'Onnx-support/Total Models: {len(onnx_list)}/{len(full_list)}')
```

## Fine-tune

### Requirement

To train this operator, install the following dependencies in addition to those listed in requirements.txt:

```shell
python -m pip install datasets evaluate
```

There is also an [example](https://github.com/towhee-io/examples/blob/main/image/text_image_search/2_deep_dive_text_image_search.ipynb) showing how to fine-tune it on a custom dataset.

### Get started

```python
import towhee

clip_op = towhee.ops.image_text_embedding.clip(model_name='clip_vit_base_patch16', modality='image').get_op()

# Replace with the directory containing your local copy of the COCO dataset.
path_to_your_coco_dataset = '/path/to/coco'

data_args = {
    'dataset_name': 'ydshieh/coco_dataset_script',
    'dataset_config_name': '2017',
    'max_seq_length': 77,
    'data_dir': path_to_your_coco_dataset,
    'image_mean': [0.48145466, 0.4578275, 0.40821073],
    'image_std': [0.26862954, 0.26130258, 0.27577711]
}

training_args = {
    'num_train_epochs': 3,  # more epochs may give better metrics
    'per_device_train_batch_size': 8,
    'per_device_eval_batch_size': 8,
    'do_train': True,
    'do_eval': True,
    'remove_unused_columns': False,
    'output_dir': './tmp/test-clip',
    'overwrite_output_dir': True,
}

model_args = {
    'freeze_vision_model': False,
    'freeze_text_model': False,
    'cache_dir': './cache'
}

clip_op.train(data_args=data_args, training_args=training_args, model_args=model_args)
```
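The `image_mean` and `image_std` values in `data_args` are CLIP's per-channel RGB statistics. The sketch below shows the standard per-channel normalization they are used for, assuming pixels are first scaled from 0-255 to [0, 1]; it is an illustration only and does not reproduce the resizing and cropping done by the actual training script.

```python
# CLIP's per-channel RGB statistics, copied from data_args above.
IMAGE_MEAN = [0.48145466, 0.4578275, 0.40821073]
IMAGE_STD = [0.26862954, 0.26130258, 0.27577711]


def normalize_pixel(rgb):
    """Scale an 8-bit RGB pixel to [0, 1], then standardize each channel."""
    return [
        (value / 255.0 - mean) / std
        for value, mean, std in zip(rgb, IMAGE_MEAN, IMAGE_STD)
    ]


print(normalize_pixel((255, 255, 255)))  # white: every channel is positive
print(normalize_pixel((0, 0, 0)))        # black: every channel is negative
```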

### Dive deep and customize your training

You can modify the [training script](https://towhee.io/image-text-embedding/clip/src/branch/main/train_clip_with_hf_trainer.py) to suit your needs.

Alternatively, refer to the original [Hugging Face Transformers training examples](https://github.com/huggingface/transformers/tree/main/examples/pytorch/contrastive-image-text).