# Chinese Image-Text Retrieval Embedding with Taiyi

*author: David Wang*

## Description

This operator extracts features from images or Chinese text with [Taiyi(太乙)](https://arxiv.org/abs/2209.02970), which generates embeddings for text and images by jointly training an image encoder and a text encoder to maximize the cosine similarity of matching image-text pairs. The method is developed by [IDEA-CCNL](https://github.com/IDEA-CCNL/Fengshenbang-LM/).
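As a rough illustration of the similarity measure mentioned above, the sketch below computes the cosine similarity between two embedding vectors with NumPy. The vectors are made-up placeholders rather than real Taiyi outputs, and `cosine_similarity` is a helper name introduced here for illustration only.

```python
import numpy as np


def cosine_similarity(a: np.ndarray, b: np.ndarray) -> float:
    """Cosine of the angle between two 1-D vectors (hypothetical helper)."""
    a = np.asarray(a, dtype=np.float64)
    b = np.asarray(b, dtype=np.float64)
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))


# Placeholder vectors standing in for an image and a text embedding.
image_vec = np.array([1.0, 2.0, 2.0])
text_vec = np.array([2.0, 4.0, 4.0])        # same direction as image_vec
unrelated_vec = np.array([2.0, -1.0, 0.0])  # orthogonal to image_vec

print(cosine_similarity(image_vec, text_vec))
print(cosine_similarity(image_vec, unrelated_vec))
```

A score near 1 means the two vectors point in nearly the same direction, while a score near 0 means they are close to orthogonal.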

## Code Example

Load an image from the path './dog.jpg' to generate an image embedding.

Read the text '一只小狗' ("a puppy") to generate a text embedding.

*Write a pipeline with explicit input/output name specifications:*

```python

from towhee import pipe, ops, DataCollection

img_pipe = (

pipe.input('url')

.map('url', 'img', ops.image_decode.cv2_rgb())

.map('img', 'vec', ops.image_text_embedding.taiyi(model_name='taiyi-clip-roberta-102m-chinese', modality='image'))

.output('img', 'vec')

)

text_pipe = (

pipe.input('text')

.map('text', 'vec', ops.image_text_embedding.taiyi(model_name='taiyi-clip-roberta-102m-chinese', modality='text'))

.output('text', 'vec')

)

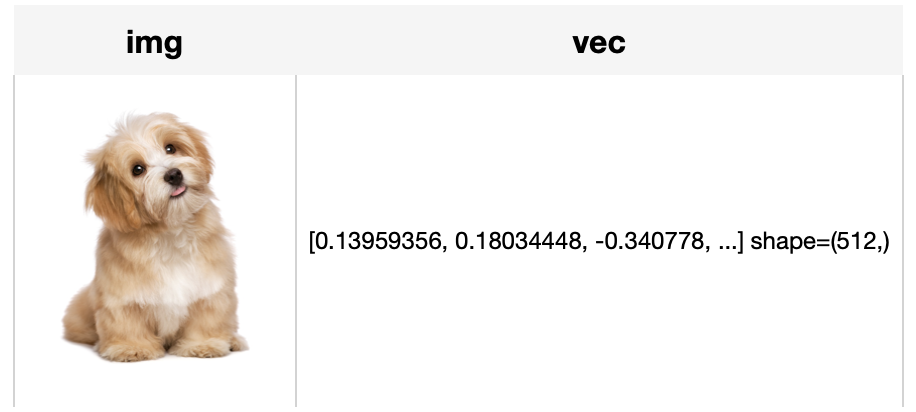

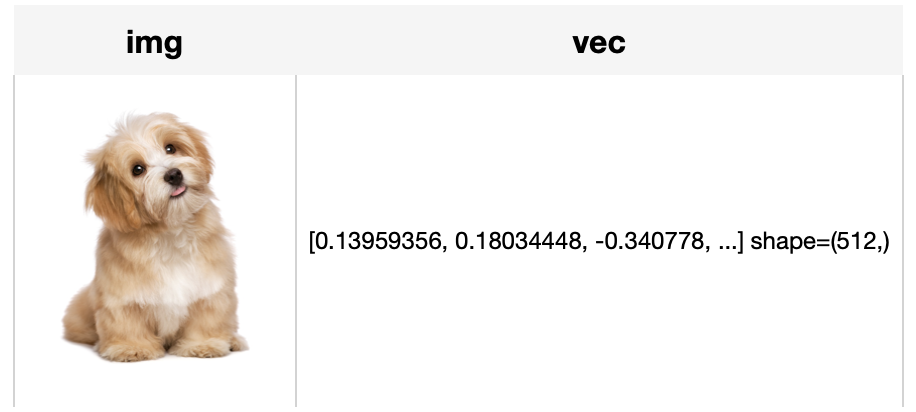

DataCollection(img_pipe('./dog.jpg')).show()

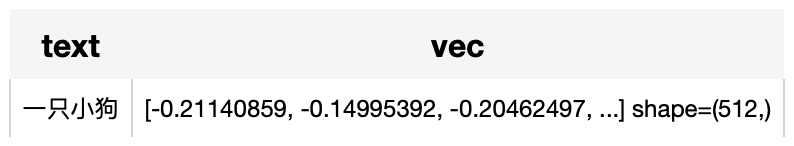

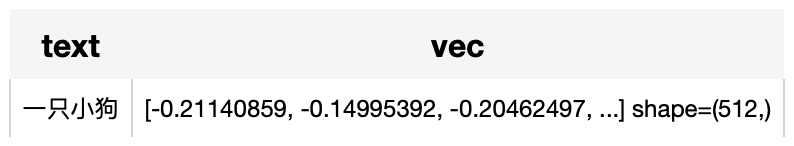

DataCollection(text_pipe('一只小狗')).show()

```

## Factory Constructor

Create the operator via the following factory method:

***taiyi(model_name, modality)***

**Parameters:**

***model_name:*** *str*

The model name of Taiyi. Supported model names:

- taiyi-clip-roberta-102m-chinese

- taiyi-clip-roberta-large-326m-chinese

***modality:*** *str*

The modality (*image* or *text*) used to generate the embedding.

***clip_checkpoint_path:*** *str*

The weight path to load for the clip branch.

***text_checkpoint_path:*** *str*

The weight path to load for the text branch.

***device:*** *str*

The device to run on, given as a string. Defaults to None, in which case "cuda" is enabled automatically when CUDA is available.
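The default described for the device parameter can be pictured with the small sketch below. It is not the operator's actual code: `resolve_device` and its `cuda_available` flag are hypothetical names used for illustration, and the fallback to "cpu" when CUDA is unavailable is an assumption, since the text above only states the CUDA case.

```python
from typing import Optional


def resolve_device(device: Optional[str] = None, cuda_available: bool = False) -> str:
    """Pick a device string (hypothetical helper, not part of the operator)."""
    if device is not None:
        # An explicit choice always wins.
        return device
    # Assumption: fall back to "cpu" when CUDA is not available.
    return "cuda" if cuda_available else "cpu"


print(resolve_device(None, cuda_available=True))
print(resolve_device(None, cuda_available=False))
print(resolve_device("cpu", cuda_available=True))
```

In practice the availability flag would come from the deep learning framework in use rather than being passed in by hand.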

## Interface

An image-text embedding operator takes a [towhee image](link/to/towhee/image/api/doc) or a string as input and generates an embedding as an ndarray.

**Parameters:**

***data:*** *towhee.types.Image (a sub-class of numpy.ndarray)* or *str*

The data (an image or text, depending on the specified modality) to generate an embedding for.

**Returns:** *numpy.ndarray*

The embedding of the data, extracted by the model.
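Because the operator returns plain ndarrays, retrieval can be sketched as ranking candidate embeddings by cosine similarity to a query embedding. The example below is a minimal sketch under that assumption: `rank_by_cosine` is a hypothetical helper, and the vectors are placeholders rather than real model outputs.

```python
import numpy as np


def rank_by_cosine(query: np.ndarray, candidates: np.ndarray, top_k: int = 3):
    """Return (indices, scores) of the top_k rows of candidates closest to query."""
    q = query / np.linalg.norm(query)
    c = candidates / np.linalg.norm(candidates, axis=1, keepdims=True)
    scores = c @ q                       # cosine similarity per candidate row
    order = np.argsort(-scores)[:top_k]  # highest similarity first
    return order, scores[order]


# Placeholder data: one query embedding and three candidate embeddings (rows).
query_vec = np.array([1.0, 0.0])
candidate_vecs = np.array([
    [0.0, 1.0],  # orthogonal to the query
    [2.0, 0.0],  # same direction as the query
    [1.0, 1.0],  # 45 degrees from the query
])

indices, scores = rank_by_cosine(query_vec, candidate_vecs, top_k=2)
print(indices, scores)
```

For larger collections, the same ranking is usually delegated to a vector index or vector database rather than computed with a full matrix product.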
