# Animating using AnimeGANv2

*author: Filip Haltmayer*

## Description

Convert an image into an anime-style image using [`AnimeGANv2`](https://github.com/TachibanaYoshino/AnimeGANv2).

## Code Example

Load an image from the path `./test.png` and convert it with the operator.

*Write the pipeline in simplified style*:

```python
import towhee

towhee.glob('./test.png') \
    .image_decode() \
    .img2img_translation.animegan(model_name='hayao') \
    .show()
```

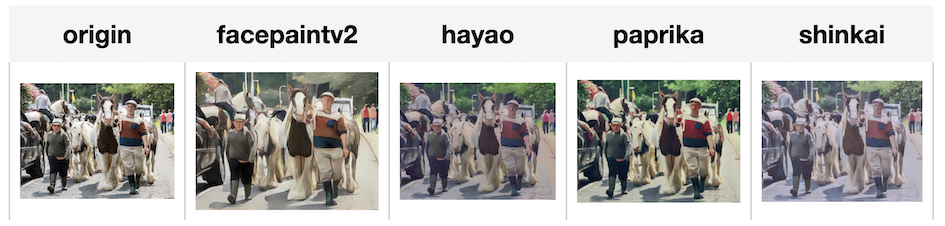

*Write a pipeline with explicit input/output name specifications:*

```python
import towhee

towhee.glob['path']('./test.png') \
    .image_decode['path', 'origin']() \
    .img2img_translation.animegan['origin', 'facepaintv2'](model_name='facepaintv2') \
    .img2img_translation.animegan['origin', 'hayao'](model_name='hayao') \
    .img2img_translation.animegan['origin', 'paprika'](model_name='paprika') \
    .img2img_translation.animegan['origin', 'shinkai'](model_name='shinkai') \
    .select['origin', 'facepaintv2', 'hayao', 'paprika', 'shinkai']() \
    .show()
```

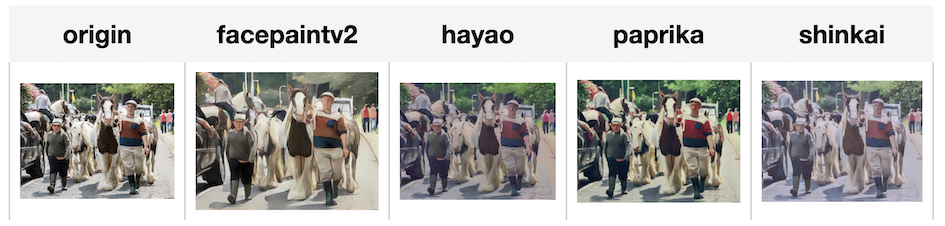

## Factory Constructor

Create the operator via the following factory method:

***img2img_translation.animegan(model_name = 'which anime model to use')***

Model options:

- celeba

- facepaintv1

- facepaintv2

- hayao

- paprika

- shinkai
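
To catch a mistyped `model_name` before building a pipeline, the name can be checked against the list above. The following is a minimal sketch; `MODEL_OPTIONS` and `check_model_name` are hypothetical helpers written for illustration and are not part of Towhee or of this operator.

```python
# Hypothetical helper, not part of Towhee: the names below are copied
# from the model options listed in this document.
MODEL_OPTIONS = ('celeba', 'facepaintv1', 'facepaintv2', 'hayao', 'paprika', 'shinkai')


def check_model_name(model_name: str) -> str:
    """Return model_name unchanged if it is a listed option, else raise ValueError."""
    if model_name not in MODEL_OPTIONS:
        raise ValueError(
            f"Unknown model_name {model_name!r}; expected one of {', '.join(MODEL_OPTIONS)}"
        )
    return model_name
```

The returned value could then be passed on as the `model_name` argument, for example `model_name=check_model_name('hayao')`. The check is case-sensitive, on the assumption that the operator expects the names exactly as listed.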

## Interface

Takes a NumPy RGB image in channels-first layout and transforms it into an anime-style image, also returned in NumPy form.

**Parameters:**

***model_name***: *str*

Which model to use for the style transfer; one of the model options listed under Factory Constructor.

***framework***: *str*

Which ML framework to use. Currently only PyTorch is supported.

**Returns**: *towhee.types.Image (a sub-class of numpy.ndarray)*

The new image.
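
The pipeline examples in this document pass the output of `image_decode` straight to the operator, so no manual conversion appears there. For readers unsure what "channels first" means, the sketch below shows the two common array layouts with plain NumPy. The helper name `to_channels_first` and the 4x6 dummy image are assumptions for illustration only; this is not the operator's own preprocessing code.

```python
import numpy as np


def to_channels_first(image: np.ndarray) -> np.ndarray:
    """Reorder a (height, width, channels) array to (channels, height, width)."""
    if image.ndim != 3:
        raise ValueError(f"expected a 3-D image array, got {image.ndim} dimensions")
    return np.transpose(image, (2, 0, 1))


# Dummy RGB image: 4 pixels high, 6 pixels wide, 3 channels.
hwc = np.zeros((4, 6, 3), dtype=np.uint8)
chw = to_channels_first(hwc)
print(hwc.shape, '->', chw.shape)  # (4, 6, 3) -> (3, 4, 6)
```

`np.transpose` returns a view rather than a copy, so the reordering itself is cheap; call `np.ascontiguousarray` on the result if a contiguous buffer is needed downstream.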

## Reference

Jie Chen, Gang Liu, Xin Chen. "AnimeGAN: A Novel Lightweight GAN for Photo Animation." ISICA 2019: Artificial Intelligence Algorithms and Applications, pp. 242-256, 2019.
