# Video-Text Retrieval Embedding with Collaborative Experts

*author: Chen Zhang*

## Description

This operator extracts video or text features using the model from [Use What You Have: Video Retrieval Using Representations From Collaborative Experts](https://arxiv.org/pdf/1907.13487v2.pdf).

For the video encoder, this operator uses embeddings that carry different modality information, extracted from pre-trained expert models for motion, appearance, scene, ASR, OCR and so on.

For the text query encoder, it uses text embeddings extracted from pre-trained models such as word2vec or GPT.

This operator is a collaborative experts model: it aggregates information from these pre-trained expert models and outputs video embeddings and text embeddings.

## Code Example

For the video input, load embeddings extracted from different upstream expert networks, such as audio, face, action, RGB, OCR and so on. They can come from upstream operators or from disk.

For the text query input, load text embeddings extracted from pre-trained models. They can come from upstream operators or from disk.

For the `ind` input, set the value for a modality to 0 if its data is invalid (for example, NaN) or if you do not want to use it; otherwise set it to 1.

```python

from towhee import pipe, ops, DataCollection

import torch

torch.manual_seed(42)

batch_size = 8

device = 'cuda' if torch.cuda.is_available() else 'cpu'

experts = {"audio": torch.rand(batch_size, 29, 128).to(device),

"face": torch.rand(batch_size, 512).to(device),

"i3d.i3d.0": torch.rand(batch_size, 1024).to(device),

"imagenet.resnext101_32x48d.0": torch.rand(batch_size, 2048).to(device),

"imagenet.senet154.0": torch.rand(batch_size, 2048).to(device),

"ocr": torch.rand(batch_size, 49, 300).to(device),

"r2p1d.r2p1d-ig65m.0": torch.rand(batch_size, 512).to(device),

"scene.densenet161.0": torch.rand(batch_size, 2208).to(device),

"speech": torch.rand(batch_size, 32, 300).to(device)

}

ind = {

"r2p1d.r2p1d-ig65m.0": torch.ones(batch_size,).to(device),

"imagenet.senet154.0": torch.ones(batch_size,).to(device),

"imagenet.resnext101_32x48d.0": torch.ones(batch_size,).to(device),

"scene.densenet161.0": torch.ones(batch_size,).to(device),

"audio": torch.ones(batch_size,).to(device),

"speech": torch.ones(batch_size,).to(device),

"ocr": torch.randint(low=0, high=2, size=(batch_size,)).to(device),

"face": torch.randint(low=0, high=2, size=(batch_size,)).to(device),

"i3d.i3d.0": torch.ones(batch_size,).to(device),

}

text = torch.randn(batch_size, 1, 37, 768).to(device)

p = (

pipe.input('experts', 'ind', 'text') \

.map(('experts', 'ind', 'text'),('text_embds', 'vid_embds') , ops.video_text_embedding.collaborative_experts(device=device)) \

.output('experts', 'ind', 'text', 'text_embds', 'vid_embds')

)

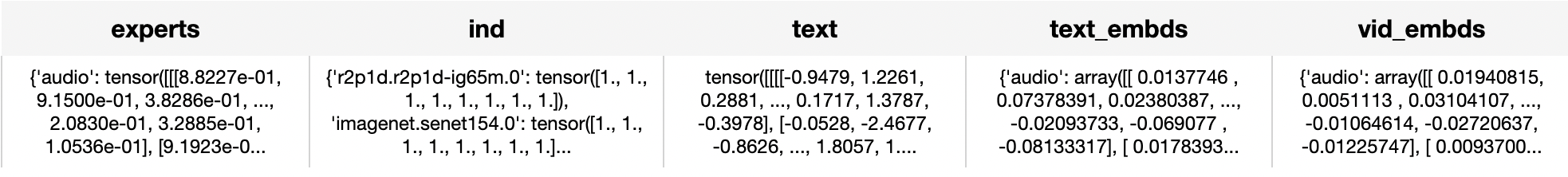

DataCollection(p(experts, ind, text)).show()

```
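The `ind` flags do not have to be written by hand. The following is a minimal sketch of one way to derive them, assuming the expert features are first available as NumPy arrays (for example, loaded from disk). The helper name `build_ind` and its `disabled` argument are hypothetical and not part of this operator.

```python
import numpy as np


def build_ind(experts, disabled=()):
    """Return per-sample validity flags (1.0 = use, 0.0 = ignore) per modality.

    Hypothetical helper: a sample is flagged invalid for a modality when any
    of its feature values is NaN or infinite, or when the modality is listed
    in `disabled`.
    """
    ind = {}
    for name, feats in experts.items():
        feats = np.asarray(feats, dtype=np.float32)
        batch = feats.shape[0]
        # Flatten everything except the batch axis, then require all values finite.
        valid = np.isfinite(feats).reshape(batch, -1).all(axis=1)
        if name in disabled:
            valid = np.zeros(batch, dtype=bool)
        ind[name] = valid.astype(np.float32)
    return ind


# Toy features: the second "face" sample contains a NaN, and "ocr" is switched off.
experts_np = {
    "face": np.array([[0.1, 0.2], [np.nan, 0.3]], dtype=np.float32),
    "ocr": np.ones((2, 3, 4), dtype=np.float32),
}
ind_np = build_ind(experts_np, disabled=("ocr",))
```

Since the example above passes tensors to the operator, arrays built this way would presumably need converting (for instance with `torch.from_numpy`) and moving to the target device first.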

## Factory Constructor

Create the operator via the following factory method:

***collaborative_experts(config: Dict = None, weights_path: str = None, device: str = None)***

**Parameters:**

***config:*** *Optional[dict]*

Default is None. If None, the [default config](https://github.com/towhee-io/towhee/blob/a713ea2deaa0273f0b6af28354a36572e8eba604/towhee/models/collaborative_experts/collaborative_experts.py#L1130) is used, which matches the one in the original paper and repo.

***weights_path:*** *Optional[str]*

Path to the pretrained model weights. If None, the weights provided with this operator are used.

***device:*** *Optional[str]*

Device to run the model on, `cpu` or `cuda`.

## Interface

For the video modality, load video embeddings extracted from different upstream expert networks, such as video, RGB and audio.

For the text modality, read the text to generate a text embedding.

**Parameters:**

***experts:*** *dict*

Embeddings extracted from different upstream expert networks, such as audio, face, action, RGB, OCR and so on. They can come from upstream operators or from disk.

***ind:*** *dict*

Validity flags for each modality. Set the value for a modality to 0 if its data is invalid (for example, NaN) or if you do not want to use it; otherwise set it to 1.

***text:*** *Tensor*

Text embeddings extracted from pre-trained models. They can come from upstream operators or from disk.

**Returns:** *numpy.ndarray*

Text embeddings and video embeddings. Each is a dict keyed by modality, with the same keys as the input modalities; each value is a tensor with shape (batch size, 768).
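For retrieval, the two returned dicts can be compared modality by modality. The sketch below is only an illustration: it assumes the embeddings have been converted to NumPy arrays of shape (batch size, 768), and it averages cosine similarities uniformly over the shared modalities, which is a simplification and not the weighting scheme of the original paper. The function names `similarity_matrix` and `rank_videos` are hypothetical.

```python
import numpy as np


def l2_normalize(x, eps=1e-12):
    """Scale each row to unit length, guarding against zero vectors."""
    norm = np.linalg.norm(x, axis=1, keepdims=True)
    return x / np.maximum(norm, eps)


def similarity_matrix(text_embds, vid_embds):
    """Average per-modality cosine similarity; rows are texts, columns are videos."""
    shared = sorted(set(text_embds) & set(vid_embds))
    if not shared:
        raise ValueError("text_embds and vid_embds share no modality keys")
    sims = []
    for key in shared:
        t = l2_normalize(np.asarray(text_embds[key], dtype=np.float64))
        v = l2_normalize(np.asarray(vid_embds[key], dtype=np.float64))
        sims.append(t @ v.T)
    # Uniform average over modalities (an assumption made for this sketch).
    return np.mean(sims, axis=0)


def rank_videos(text_embds, vid_embds):
    """For each text query, return video indices sorted from best to worst match."""
    sim = similarity_matrix(text_embds, vid_embds)
    return np.argsort(-sim, axis=1)


# Toy data: each text embedding is a slightly perturbed copy of its video embedding.
rng = np.random.default_rng(0)
vid = {"face": rng.normal(size=(4, 768)), "ocr": rng.normal(size=(4, 768))}
txt = {k: v + 0.01 * rng.normal(size=v.shape) for k, v in vid.items()}
ranking = rank_videos(txt, vid)
```

In this toy setup, `ranking[i, 0]` is the index of the video that best matches text query `i`.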

## More Resources

- [Build Better Multimodal RAG Pipelines with FiftyOne, LlamaIndex, and Milvus - Zilliz blog](https://zilliz.com/blog/build-better-multimodal-rag-pipelines-with-fiftyone-llamaindex-and-milvus): Enhance the capabilities of multimodal systems by efficiently leveraging text and visual data for improved data retrieval and context-rich responses.

- [What is BERT (Bidirectional Encoder Representations from Transformers)? - Zilliz blog](https://zilliz.com/learn/what-is-bert): Learn what Bidirectional Encoder Representations from Transformers (BERT) is and how it uses pre-training and fine-tuning to achieve its remarkable performance.

- [The guide to mistral-embed | Mistral AI](https://zilliz.com/ai-models/mistral-embed): mistral-embed: a specialized embedding model for text data with a context window of 8,000 tokens. Optimized for similarity retrieval and RAG applications.

- [Supercharged Semantic Similarity Search in Production - Zilliz blog](https://zilliz.com/learn/supercharged-semantic-similarity-search-in-production): Building a Blazing Fast, Highly Scalable Text-to-Image Search with CLIP embeddings and Milvus, the most advanced open-source vector database.

- [The guide to all-MiniLM-L12-v2 | Hugging Face](https://zilliz.com/ai-models/all-MiniLM-L12-v2): all-MiniLM-L12-v2: a text embedding model ideal for semantic search and RAG and fine-tuned based on Microsoft/MiniLM-L12-H384-uncased

- [Sparse and Dense Embeddings: A Guide for Effective Information Retrieval with Milvus | Zilliz Webinar](https://zilliz.com/event/sparse-and-dense-embeddings-webinar): Zilliz webinar covering what sparse and dense embeddings are and when you'd want to use one over the other.

- [Enhancing Information Retrieval with Sparse Embeddings | Zilliz Learn - Zilliz blog](https://zilliz.com/learn/enhancing-information-retrieval-learned-sparse-embeddings): Explore the inner workings, advantages, and practical applications of learned sparse embeddings with the Milvus vector database

- [Training Text Embeddings with Jina AI - Zilliz blog](https://zilliz.com/blog/training-text-embeddings-with-jina-ai): In a recent talk by Bo Wang, he discussed the creation of Jina text embeddings for modern vector search and RAG systems. He also shared methodologies for training embedding models that effectively encode extensive information, along with guidance o