video-text-embedding

Video-Text Retrieval Embedding with Collaborative Experts

author: Chen Zhang

Description

This operator extracts video or text features using the model from "Use What You Have: Video Retrieval Using Representations From Collaborative Experts".

For the video encoder, the operator uses embeddings that carry different modality information, such as motion, appearance, scene, ASR or OCR, each extracted by a pre-trained expert model.

For the text query encoder, it uses text embeddings extracted from pre-trained models such as word2vec or GPT.

The operator is a collaborative experts model: it aggregates information from these pre-trained expert models and outputs the video embeddings and text embeddings.

Code Example

For the video input, load embeddings extracted from different upstream expert networks, such as audio, face, action, RGB, OCR and so on. They can come from upstream operators or from disk.

For the text query input, load text embeddings extracted from pre-trained models. They can come from upstream operators or from disk.

For the ind input, set the value for a modality to 0 if its data is invalid (for example, NaN) or if you do not want to use it; otherwise set it to 1.

from towhee import pipe, ops, DataCollection
import torch

torch.manual_seed(42)
batch_size = 8
device = 'cuda' if torch.cuda.is_available() else 'cpu'

# Random stand-ins for the features produced by each upstream expert network
experts = {
    "audio": torch.rand(batch_size, 29, 128).to(device),
    "face": torch.rand(batch_size, 512).to(device),
    "i3d.i3d.0": torch.rand(batch_size, 1024).to(device),
    "imagenet.resnext101_32x48d.0": torch.rand(batch_size, 2048).to(device),
    "imagenet.senet154.0": torch.rand(batch_size, 2048).to(device),
    "ocr": torch.rand(batch_size, 49, 300).to(device),
    "r2p1d.r2p1d-ig65m.0": torch.rand(batch_size, 512).to(device),
    "scene.densenet161.0": torch.rand(batch_size, 2208).to(device),
    "speech": torch.rand(batch_size, 32, 300).to(device),
}

# Per-sample flags for each modality: 1 = use it, 0 = invalid or ignored
ind = {
    "r2p1d.r2p1d-ig65m.0": torch.ones(batch_size).to(device),
    "imagenet.senet154.0": torch.ones(batch_size).to(device),
    "imagenet.resnext101_32x48d.0": torch.ones(batch_size).to(device),
    "scene.densenet161.0": torch.ones(batch_size).to(device),
    "audio": torch.ones(batch_size).to(device),
    "speech": torch.ones(batch_size).to(device),
    "ocr": torch.randint(low=0, high=2, size=(batch_size,)).to(device),
    "face": torch.randint(low=0, high=2, size=(batch_size,)).to(device),
    "i3d.i3d.0": torch.ones(batch_size).to(device),
}

text = torch.randn(batch_size, 1, 37, 768).to(device)

p = (

pipe.input('experts', 'ind', 'text') \

.map(('experts', 'ind', 'text'),('text_embds', 'vid_embds') , ops.video_text_embedding.collaborative_experts(device=device)) \

.output('experts', 'ind', 'text', 'text_embds', 'vid_embds')

)

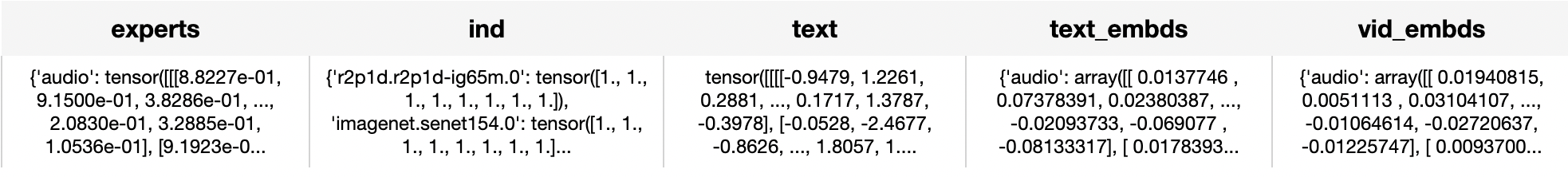

DataCollection(p(experts, ind, text)).show()
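The example above fills ind with ones or random values. In practice the flags could be derived from the features themselves. The following is a minimal sketch under that assumption: `build_ind` is a hypothetical helper, not part of this operator, and it treats a sample as invalid when any of its values is NaN or infinite.

```python
import torch

def build_ind(experts):
    """Return a 0/1 flag per sample for every modality (hypothetical helper)."""
    ind = {}
    for name, feats in experts.items():
        # Flatten everything except the batch dimension, then keep only the
        # samples whose values are all finite (no NaN, no inf).
        flat = feats.reshape(feats.shape[0], -1)
        valid = torch.isfinite(flat).all(dim=1)
        ind[name] = valid.to(feats.dtype)
    return ind

experts = {
    "face": torch.rand(4, 512),
    "ocr": torch.rand(4, 49, 300),
}
experts["ocr"][2, 0, 0] = float("nan")  # simulate one broken OCR feature

ind = build_ind(experts)

# To ignore a modality on purpose, zero out its flags.
ind["face"] = torch.zeros_like(ind["face"])
```

Here the third sample is flagged 0 for "ocr" because of the injected NaN, and every sample is flagged 0 for "face" because that modality was switched off by hand.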

Factory Constructor

Create the operator via the following factory method:

collaborative_experts(config: Dict = None, weights_path: str = None, device: str = None)

Parameters:

config: Optional[dict]

Defaults to None, in which case the operator uses the default config, which matches the one in the original paper and repo.

weights_path: Optional[str]

Path to the pretrained model weights. If None, the weights shipped with this operator are used.

device: Optional[str]

cpu or cuda.

Interface

For the video modality, load video embeddings extracted from different upstream expert networks, such as video, RGB, audio.

For the text modality, read the text to generate a text embedding.

Parameters:

experts: dict

Embeddings extracted from different upstream expert networks, such as audio, face, action, RGB, OCR and so on. They can come from upstream operators or from disk.

ind: dict

For each modality, the value is 0 if the data is invalid (for example, NaN) or you do not want to use it; otherwise it is 1.

text: Tensor

Text embeddings extracted from pre-trained models. They can come from upstream operators or from disk.

Returns: numpy.ndarray

Text embeddings and video embeddings. Each is a dict keyed by modality, with the same keys as the input modalities; each value is a tensor with shape (batch size, 768).
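One way the returned dicts might be used for retrieval is sketched below. It assumes each value can be converted to a NumPy array of shape (batch size, 768), and it scores a query against a video by averaging the cosine similarity over the shared modalities. The uniform average is an assumption made for illustration; the original model may weight modalities differently. `similarity_matrix` is a hypothetical helper, and the embeddings are random stand-ins rather than operator output.

```python
import numpy as np

def similarity_matrix(text_embds, vid_embds):
    """Average cosine similarity over shared modalities (illustrative only)."""
    shared = sorted(set(text_embds) & set(vid_embds))
    sims = []
    for name in shared:
        t = np.asarray(text_embds[name], dtype=np.float64)
        v = np.asarray(vid_embds[name], dtype=np.float64)
        # L2-normalize each row so the dot product equals cosine similarity.
        t = t / np.linalg.norm(t, axis=1, keepdims=True)
        v = v / np.linalg.norm(v, axis=1, keepdims=True)
        sims.append(t @ v.T)  # shape: (num_queries, num_videos)
    return np.mean(sims, axis=0)

rng = np.random.default_rng(0)
vid_embds = {
    "face": rng.normal(size=(3, 768)),
    "ocr": rng.normal(size=(3, 768)),
}
# Stand-in text embeddings: copies of the video ones, so query i matches video i.
text_embds = {name: emb.copy() for name, emb in vid_embds.items()}

sims = similarity_matrix(text_embds, vid_embds)
ranking = np.argsort(-sims, axis=1)  # best-matching video first for each query
```

Row i of `ranking` lists video indices for query i, from most to least similar.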
