action-classification

Video Classification with Omnivore

Author: Xinyu Ge

Description

A video classification operator generates labels (with corresponding scores) and extracts features for the input video. It transforms the video into frames and loads a pre-trained model by model name. This operator implements pre-trained models from Omnivore and maps the model outputs to the labels provided by the datasets used for pre-training.

Code Example

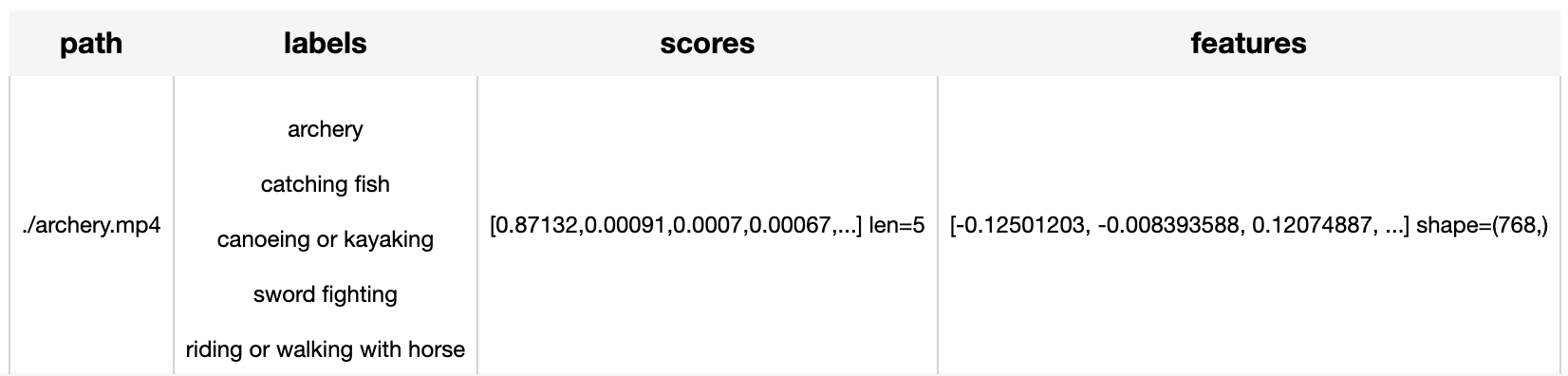

Use the pre-trained Omnivore model to classify the video at the path './archery.mp4' and generate a feature vector for it.

Write a pipeline with explicitly named inputs and outputs:

    from towhee import pipe, ops, DataCollection

    p = (
        pipe.input('path')
            # Decode the video file into frames.
            .map('path', 'frames', ops.video_decode.ffmpeg())
            # Classify the frames and extract features with Omnivore.
            .map('frames', ('labels', 'scores', 'features'),
                 ops.action_classification.omnivore(model_name='omnivore_swinT'))
            .output('path', 'labels', 'scores', 'features')
    )

    # Run the pipeline on one video and display the result.
    DataCollection(p('./archery.mp4')).show()

Factory Constructor

Create the operator via the following factory method:

action_classification.omnivore(model_name='omnivore_swinT', skip_preprocess=False, classmap=None, topk=5)

Parameters:

model_name: str

The name of the pre-trained Omnivore model.

Supported model names:

- omnivore_swinT

- omnivore_swinS

- omnivore_swinB

- omnivore_swinB_in21k

- omnivore_swinL_in21k

skip_preprocess: bool

Flag that controls whether to skip video transforms. Defaults to False. If set to True, the video transform step is skipped. In this case, the user must guarantee that all input video frames are already preprocessed properly and can be fed to the model directly.

classmap: Dict[str, int]

Dictionary that maps class names to integer class indices. If not given, the operator loads the default class map dictionary.

topk: int

The number of top-scoring labels and scores to return in the result. The default value is 5.
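
To illustrate how `classmap` and `topk` interact, here is a minimal sketch in plain Python. It is not the operator's actual implementation. It assumes that `classmap` maps class names to integer indices (as the declared type suggests) and that the model emits one raw score per class. The names `softmax`, `topk_labels`, `toy_classmap`, and `toy_logits` are hypothetical and exist only for this example.

```python
import math
from typing import Dict, List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Convert raw scores to probabilities in a numerically stable way."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def topk_labels(
    logits: Sequence[float], classmap: Dict[str, int], topk: int = 5
) -> Tuple[List[str], List[float]]:
    """Return the `topk` class names and their probabilities, best first."""
    # Assumption: classmap maps class name -> index, so invert it for lookup.
    index_to_name = {idx: name for name, idx in classmap.items()}
    probs = softmax(logits)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    ranked = ranked[:topk]
    return [index_to_name[i] for i in ranked], [probs[i] for i in ranked]


# Toy data: three made-up classes and made-up raw scores.
toy_classmap = {"archery": 0, "bowling": 1, "juggling": 2}
toy_logits = [2.0, 0.5, 1.0]

labels, scores = topk_labels(toy_logits, toy_classmap, topk=2)
print(labels)  # ['archery', 'juggling']
```

A smaller `topk` only trims the ranked list. It does not change the scores themselves, which are computed over all classes.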

Interface

Given input video data, a video classification operator generates a list of class labels with corresponding scores, plus a feature vector in numpy.ndarray.

Parameters:

video: Union[str, numpy.ndarray]

Input video data, given either as a local path (string) or as video frames (ndarray).

Returns: (list, list, torch.Tensor)

A tuple of (labels, scores, features): a list of predicted class names, a list of corresponding scores, and the features extracted from the video.
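
As a hedged sketch of how the returned tuple might be consumed downstream, the snippet below pairs labels with scores and compares two feature vectors by cosine similarity. It does not call the operator. The sample values are invented, the helpers `pair_predictions` and `cosine_similarity` are hypothetical, and it assumes the features have been converted to a flat list of floats first.

```python
import math
from typing import List, Sequence, Tuple


def pair_predictions(
    labels: Sequence[str], scores: Sequence[float]
) -> List[Tuple[str, float]]:
    """Zip labels with their scores so each prediction is one record."""
    if len(labels) != len(scores):
        raise ValueError("labels and scores must have the same length")
    return list(zip(labels, scores))


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


# Invented stand-ins for one operator result: (labels, scores, features).
sample_labels = ["archery", "golf driving"]
sample_scores = [0.9, 0.05]
sample_features = [1.0, 0.0, 0.0]

predictions = pair_predictions(sample_labels, sample_scores)
best_label, best_score = predictions[0]

# Compare against the (invented) features of another video.
other_features = [0.0, 1.0, 0.0]
similarity = cosine_similarity(sample_features, other_features)
```

Keeping labels and scores paired avoids index mismatches when results are filtered or sorted later, and cosine similarity is one common choice for comparing feature vectors in a similarity search.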
