
Updated 2 years ago

image-text-embedding

Image-Text Retrieval Embedding with BLIP

author: David Wang

Description

This operator extracts features for images or text with BLIP, which generates embeddings for text and images by jointly training an image encoder and a text encoder to maximize the cosine similarity between matching image-text pairs. This is an adaptation of salesforce/BLIP.
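To make "maximize the cosine similarity" concrete, here is a minimal NumPy sketch of a symmetric image-text contrastive (InfoNCE-style) loss over a batch of matched pairs. It is a simplified illustration of the general idea only, not BLIP's training code: BLIP's pretraining combines several objectives and uses a momentum encoder. The temperature of 0.07 is an assumption chosen for illustration.

```python
import numpy as np

def l2_normalize(x: np.ndarray, axis: int = -1) -> np.ndarray:
    """Scale each row to unit length so dot products equal cosine similarities."""
    return x / np.linalg.norm(x, axis=axis, keepdims=True)

def log_softmax(logits: np.ndarray, axis: int) -> np.ndarray:
    """Numerically stable log-softmax along the given axis."""
    shifted = logits - logits.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))

def contrastive_loss(img_emb: np.ndarray, txt_emb: np.ndarray, temperature: float = 0.07) -> float:
    """Symmetric contrastive loss for N matched pairs.

    Row i of img_emb and row i of txt_emb are assumed to be a matching pair;
    every other row in the batch acts as a negative.
    """
    img = l2_normalize(img_emb)
    txt = l2_normalize(txt_emb)
    logits = img @ txt.T / temperature                         # (N, N) scaled cosine similarities
    idx = np.arange(len(logits))
    loss_i2t = -log_softmax(logits, axis=1)[idx, idx].mean()   # each image should pick its own text
    loss_t2i = -log_softmax(logits, axis=0)[idx, idx].mean()   # each text should pick its own image
    return float((loss_i2t + loss_t2i) / 2)

# Random stand-in embeddings: texts nearly identical to their paired images.
rng = np.random.default_rng(0)
img = rng.normal(size=(4, 16))
txt = img + 0.01 * rng.normal(size=(4, 16))
# Aligned pairs should give a lower loss than deliberately mismatched ones.
print(contrastive_loss(img, txt), contrastive_loss(img, txt[::-1]))
```

Minimizing this loss pushes the diagonal (matching) similarities up relative to all mismatched pairs in the batch.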

Code Example

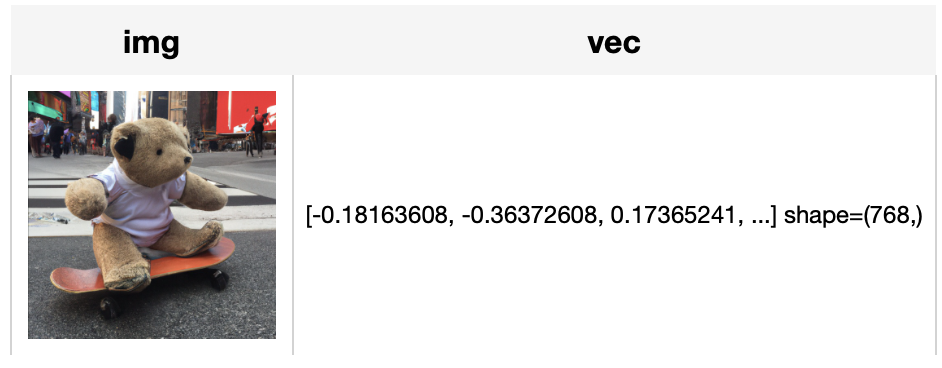

Load an image from path './teddy.jpg' to generate an image embedding.

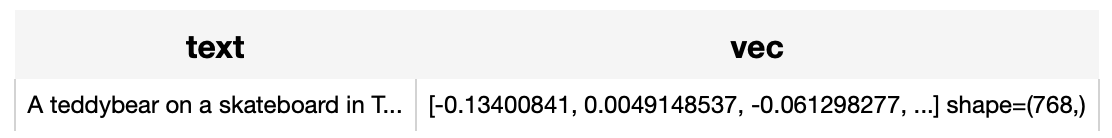

Read the text 'A teddybear on a skateboard in Times Square.' to generate a text embedding.

Write a pipeline with explicit input/output name specifications:

```python
from towhee import pipe, ops, DataCollection

# Image pipeline: decode the file as an RGB image, then embed it with BLIP.
img_pipe = (
    pipe.input('url')
        .map('url', 'img', ops.image_decode.cv2_rgb())
        .map('img', 'vec', ops.image_text_embedding.blip(model_name='blip_itm_base_coco', modality='image'))
        .output('img', 'vec')
)

# Text pipeline: embed the raw string with the same BLIP model.
text_pipe = (
    pipe.input('text')
        .map('text', 'vec', ops.image_text_embedding.blip(model_name='blip_itm_base_coco', modality='text'))
        .output('text', 'vec')
)

DataCollection(img_pipe('./teddy.jpg')).show()
DataCollection(text_pipe('A teddybear on a skateboard in Times Square.')).show()
```
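The two pipelines each return one vector per input. For text-to-image retrieval, one option is to stack the image vectors into a matrix and rank them by cosine similarity to a text vector. The sketch below assumes each `vec` output is a 1-D `numpy.ndarray`; `rank_by_cosine` is a hypothetical helper, not part of Towhee, and random vectors (of arbitrary dimension) stand in for real embeddings so the example runs without downloading a model.

```python
import numpy as np

def rank_by_cosine(query: np.ndarray, gallery: np.ndarray, top_k: int = 3) -> list[tuple[int, float]]:
    """Return (index, score) pairs for the top_k gallery rows most similar to query."""
    q = query / np.linalg.norm(query)
    g = gallery / np.linalg.norm(gallery, axis=1, keepdims=True)
    scores = g @ q                        # cosine similarity of every gallery row
    order = np.argsort(-scores)[:top_k]   # highest score first
    return [(int(i), float(scores[i])) for i in order]

# Stand-ins for vectors collected from img_pipe and text_pipe. With Towhee
# installed, a real vector could presumably be read with something like
# img_pipe('./teddy.jpg').get()[1] (assumption, not run here).
rng = np.random.default_rng(42)
image_vecs = rng.normal(size=(5, 256))
text_vec = image_vecs[2] + 0.1 * rng.normal(size=256)  # a "caption" close to image 2

print(rank_by_cosine(text_vec, image_vecs, top_k=2))
```

At larger scale the same ranking is usually delegated to a vector database rather than computed with a dense matrix product.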

Factory Constructor

Create the operator via the following factory method:

blip(model_name, modality)

Parameters:

model_name: str

The model name of BLIP. Supported model names:

- blip_itm_base_coco

- blip_itm_large_coco

- blip_itm_base_flickr

- blip_itm_large_flickr

modality: str

Which modality ('image' or 'text') is used to generate the embedding.
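Before constructing the operator, a caller may want to fail fast on a misspelled model name or modality. The hypothetical helper below (not part of Towhee) only checks the arguments against the values documented above; the operator itself may validate its inputs differently.

```python
SUPPORTED_MODEL_NAMES = (
    'blip_itm_base_coco',
    'blip_itm_large_coco',
    'blip_itm_base_flickr',
    'blip_itm_large_flickr',
)
SUPPORTED_MODALITIES = ('image', 'text')

def check_blip_args(model_name: str, modality: str) -> None:
    """Raise ValueError if the arguments fall outside the documented values."""
    if model_name not in SUPPORTED_MODEL_NAMES:
        raise ValueError(f'unsupported model_name {model_name!r}; expected one of {SUPPORTED_MODEL_NAMES}')
    if modality not in SUPPORTED_MODALITIES:
        raise ValueError(f'unsupported modality {modality!r}; expected one of {SUPPORTED_MODALITIES}')

check_blip_args('blip_itm_base_coco', 'image')  # valid arguments pass silently
```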

Interface

An image-text embedding operator takes a towhee image or a string as input and generates an embedding as a numpy.ndarray.
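The input contract depends on the modality: an image for `modality='image'`, a string for `modality='text'`. A hypothetical pre-flight check (not part of the operator) can make that explicit. The H x W x 3 shape requirement is an assumption based on the RGB output of `ops.image_decode.cv2_rgb()` in the pipeline example.

```python
import numpy as np

def check_input(data, modality: str) -> None:
    """Raise TypeError/ValueError if data does not match the chosen modality."""
    if modality == 'image':
        # towhee.types.Image subclasses numpy.ndarray, so this isinstance check accepts it.
        if not isinstance(data, np.ndarray):
            raise TypeError('image modality expects a numpy.ndarray (e.g. towhee.types.Image)')
        if data.ndim != 3 or data.shape[2] != 3:
            raise ValueError(f'expected an H x W x 3 image, got shape {data.shape}')
    elif modality == 'text':
        if not isinstance(data, str):
            raise TypeError('text modality expects a str')
        if not data.strip():
            raise ValueError('text must not be empty')
    else:
        raise ValueError(f'unknown modality {modality!r}')

check_input(np.zeros((224, 224, 3), dtype=np.uint8), 'image')
check_input('A teddybear on a skateboard in Times Square.', 'text')
```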

save_model(format='pytorch', path='default')

Save the model locally in the specified format.

Parameters:

format: str

The format of the saved model. Defaults to 'pytorch'.

path: str

The path to save the model to. By default, the model is saved to the operator directory.

```python
from towhee import ops

op = ops.image_text_embedding.blip(model_name='blip_itm_base_coco', modality='image').get_op()
op.save_model('onnx', 'test.onnx')
```

__call__(data)

Generate an embedding for the input data.

Parameters:

data: towhee.types.Image (a sub-class of numpy.ndarray) or str

The data (image or text, depending on the specified modality) to generate the embedding for.

Returns: numpy.ndarray

The embedding extracted by the model.

supported_model_names(format=None)

Get a list of all supported model names, or only the model names supported for the specified model format.

Parameters:

format: str

The model format, such as 'pytorch' or 'torchscript'.

```python
from towhee import ops

op = ops.image_text_embedding.blip(model_name='blip_itm_base_coco', modality='image').get_op()
full_list = op.supported_model_names()
onnx_list = op.supported_model_names(format='onnx')
print(f'Onnx-support/Total Models: {len(onnx_list)}/{len(full_list)}')
```
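The example above prints only counts. To see which models lack support for a given format, one can take the difference of the two lists. The lists below are made-up placeholders (`model_a` and so on are hypothetical names) standing in for the output of `op.supported_model_names()`; the real contents depend on the installed operator version.

```python
def unsupported_for_format(full_list: list[str], format_list: list[str]) -> list[str]:
    """Return models in full_list that are missing from format_list, keeping the original order."""
    supported = set(format_list)
    return [name for name in full_list if name not in supported]

# Hypothetical placeholder lists, NOT real output of supported_model_names().
full_list = ['model_a', 'model_b', 'model_c', 'model_d']
onnx_list = ['model_a', 'model_c']

missing = unsupported_for_format(full_list, onnx_list)
print(f'Onnx-support/Total Models: {len(onnx_list)}/{len(full_list)}')
print('No ONNX support listed for:', missing)
```

Using a set for the lookup keeps the check linear in the list sizes while the list comprehension preserves the original ordering.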

Fine-tune

Requirement

If you want to train this operator, you need to install the following dependencies in addition to those in requirements.txt. There is also an example showing how to fine-tune it on a custom dataset.

```shell
python -m pip install datasets
```

Get started

```python
import towhee

blip_op = towhee.ops.image_text_embedding.blip(model_name='blip_itm_base_coco', modality='image').get_op()

# Placeholder: replace with the local directory of your COCO 2017 dataset.
path_to_your_coco_dataset = '/path/to/coco'

data_args = {
    'dataset_name': 'ydshieh/coco_dataset_script',
    'dataset_config_name': '2017',
    'max_seq_length': 77,
    'data_dir': path_to_your_coco_dataset,
    'image_mean': [0.48145466, 0.4578275, 0.40821073],
    'image_std': [0.26862954, 0.26130258, 0.27577711]
}

training_args = {
    'num_train_epochs': 3,  # increase the number of epochs to get a better metric
    'per_device_train_batch_size': 8,
    'per_device_eval_batch_size': 8,
    'do_train': True,
    'do_eval': True,
    'remove_unused_columns': False,
    'output_dir': './tmp/test-blip',
    'overwrite_output_dir': True,
}

model_args = {
    'freeze_vision_model': False,
    'freeze_text_model': False,
    'cache_dir': './cache'
}

blip_op.train(data_args=data_args, training_args=training_args, model_args=model_args)
```
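The `image_mean` and `image_std` entries in `data_args` are per-channel RGB statistics (the same values used in OpenAI CLIP preprocessing). Conceptually, each pixel is scaled to [0, 1] and then standardized per channel as (x - mean) / std. The NumPy sketch below illustrates only that arithmetic on an H x W x 3 image; the training script may instead perform resizing, cropping, and normalization through torchvision or Hugging Face transforms.

```python
import numpy as np

IMAGE_MEAN = np.array([0.48145466, 0.4578275, 0.40821073])
IMAGE_STD = np.array([0.26862954, 0.26130258, 0.27577711])

def normalize_image(img_uint8: np.ndarray) -> np.ndarray:
    """Map an H x W x 3 uint8 RGB image to per-channel standardized float32 values."""
    x = img_uint8.astype(np.float32) / 255.0                  # scale to [0, 1]
    return ((x - IMAGE_MEAN) / IMAGE_STD).astype(np.float32)  # broadcasts over the last (channel) axis

# A 2 x 2 image whose pixels are (rounded) channel means maps to values close to zero.
mean_pixel = np.round(IMAGE_MEAN * 255).astype(np.uint8)
img = np.tile(mean_pixel, (2, 2, 1))
print(normalize_image(img).round(3))
```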

Dive deep and customize your training

You can modify the training script (train_blip_with_hf_trainer.py) to suit your needs, or refer to the original Hugging Face Transformers training examples.
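When changing `num_train_epochs` or `per_device_train_batch_size`, it can help to estimate how many optimizer steps a run will take. Assuming no gradient accumulation and that a partial final batch still counts as a step, the count is ceil(N / (B * D)) * E for N training examples, per-device batch size B, D devices, and E epochs. The helper and the dataset size below are illustrative only; the Hugging Face `Trainer` computes this internally, and its value can differ with options such as `gradient_accumulation_steps` or `dataloader_drop_last`.

```python
import math

def estimate_train_steps(num_examples: int, per_device_batch_size: int,
                         num_epochs: int, num_devices: int = 1) -> int:
    """Rough optimizer-step count, assuming no gradient accumulation."""
    global_batch = per_device_batch_size * num_devices
    steps_per_epoch = math.ceil(num_examples / global_batch)  # last partial batch still counts
    return steps_per_epoch * num_epochs

# Hypothetical dataset of 10,000 pairs with the batch size and epochs from the example above.
print(estimate_train_steps(10_000, per_device_batch_size=8, num_epochs=3))  # 3750
```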

More Resources

- CLIP Object Detection: Merging AI Vision with Language Understanding - Zilliz blog: CLIP Object Detection combines CLIP's text-image understanding with object detection tasks, allowing CLIP to locate and identify objects in images using text.

- Supercharged Semantic Similarity Search in Production - Zilliz blog: Building a Blazing Fast, Highly Scalable Text-to-Image Search with CLIP embeddings and Milvus, the most advanced open-source vector database.

- Hybrid Search: Combining Text and Image for Enhanced Search Capabilities - Zilliz blog: Milvus enables hybrid sparse and dense vector search and multi-vector search capabilities, simplifying the vectorization and search process.

- The guide to all-MiniLM-L12-v2 | Hugging Face: all-MiniLM-L12-v2: a text embedding model ideal for semantic search and RAG and fine-tuned based on Microsoft/MiniLM-L12-H384-uncased

- An LLM Powered Text to Image Prompt Generation with Milvus - Zilliz blog: An interesting LLM project powered by the Milvus vector database for generating more efficient text-to-image prompts.

- Build a Multimodal Search System with Milvus - Zilliz blog: Implementing a Multimodal Similarity Search System Using Milvus, Radient, ImageBind, and Meta-Chameleon-7b

- Enhancing Efficiency in Vector Searches with Binary Quantization and Milvus - Zilliz blog: Binary quantization represents a transformative approach to managing and searching vector data within Milvus, offering significant enhancements in both performance and efficiency. By simplifying vector representations into binary codes, this method leverages the speed of bitwise operations, substantially accelerating search operations and reducing computational overhead.

- Image Embeddings for Enhanced Image Search - Zilliz blog: Image Embeddings are the core of modern computer vision algorithms. Understand their implementation and use cases and explore different image embedding models.
