Image-Text Retrieval Embedding with LightningDOT

author: David Wang

Description

This operator extracts features from an image or a text with LightningDOT, which generates embeddings for text and images by jointly training an image encoder and a text encoder to maximize the cosine similarity between matching image-text pairs.
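As a rough illustration of the training idea described above (not LightningDOT's actual training code), the sketch below computes cosine similarity and a generic image-to-text InfoNCE-style contrastive loss over a small batch. It assumes that row i of the image list and row i of the text list form a matching pair and every other text in the batch is a negative; the temperature of 0.07 is an assumed value chosen only for illustration.

```python
import math
from typing import List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def contrastive_loss(
    image_vecs: List[List[float]],
    text_vecs: List[List[float]],
    temperature: float = 0.07,  # assumed value, for illustration only
) -> float:
    """Image-to-text InfoNCE loss: (image_vecs[i], text_vecs[i]) is the positive
    pair and every other text in the batch acts as a negative."""
    total = 0.0
    n = len(image_vecs)
    for i in range(n):
        logits = [cosine_similarity(image_vecs[i], t) / temperature for t in text_vecs]
        # Numerically stable log-sum-exp over all candidate texts.
        m = max(logits)
        log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
        # Negative log-softmax probability of the matching text.
        total += log_sum_exp - logits[i]
    return total / n


# Aligned pairs should give a lower loss than swapped pairs.
images = [[1.0, 0.0], [0.0, 1.0]]
aligned = [[0.9, 0.1], [0.1, 0.9]]
swapped = [[0.1, 0.9], [0.9, 0.1]]
print(contrastive_loss(images, aligned) < contrastive_loss(images, swapped))
```

Minimizing this loss pushes the cosine similarity of matching pairs up relative to non-matching ones; a symmetric variant would add the analogous text-to-image term.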

Code Example

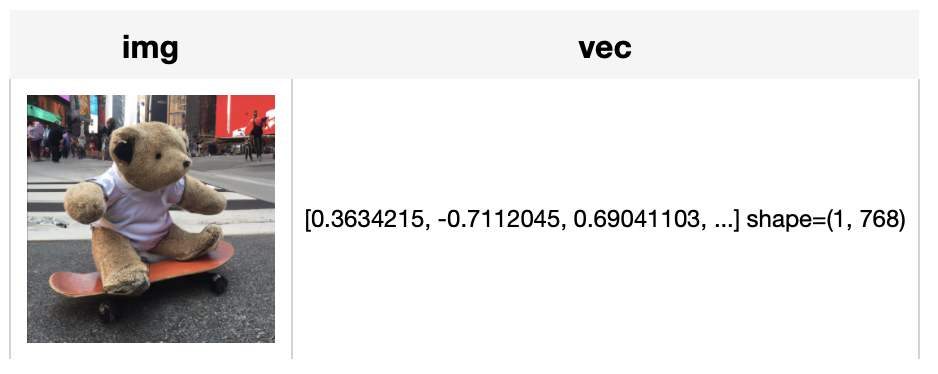

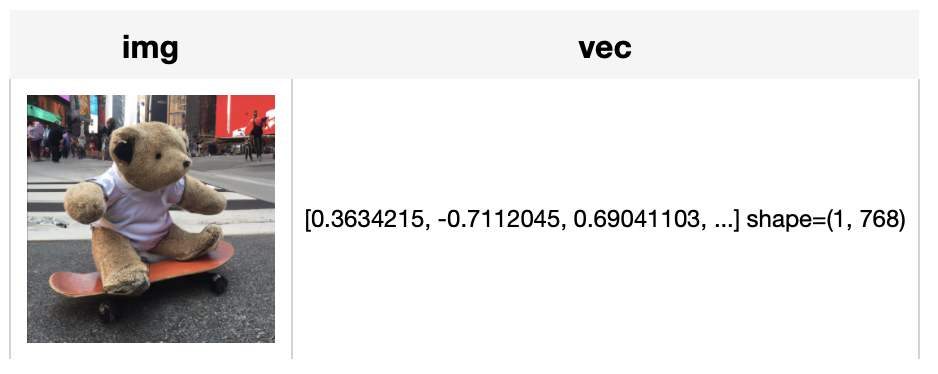

Load an image from path './teddy.jpg' to generate an image embedding.

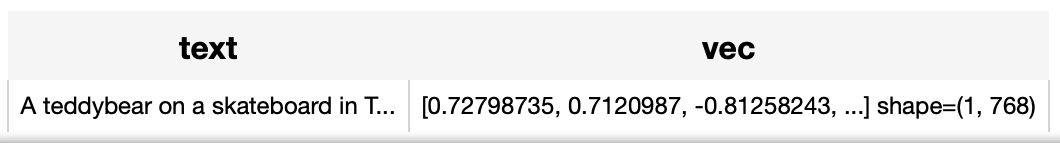

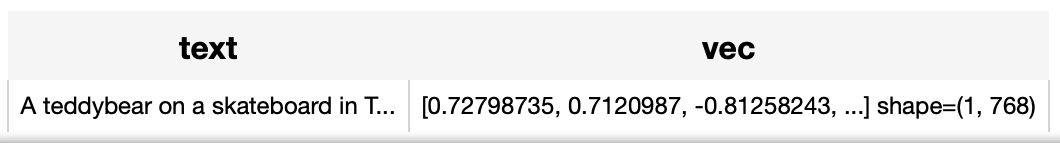

Read the text 'A teddybear on a skateboard in Times Square.' to generate a text embedding.

Write a pipeline with explicit input/output name specifications:

```python
from towhee import pipe, ops, DataCollection

# Image pipeline: decode the image file into an RGB image, then embed it.
img_pipe = (
    pipe.input('url')
        .map('url', 'img', ops.image_decode.cv2_rgb())
        .map('img', 'vec', ops.image_text_embedding.lightningdot(model_name='lightningdot_base', modality='image'))
        .output('img', 'vec')
)

# Text pipeline: embed the raw string directly.
text_pipe = (
    pipe.input('text')
        .map('text', 'vec', ops.image_text_embedding.lightningdot(model_name='lightningdot_base', modality='text'))
        .output('text', 'vec')
)

# Run each pipeline once and display its outputs as a table.
DataCollection(img_pipe('./teddy.jpg')).show()
DataCollection(text_pipe('A teddybear on a skateboard in Times Square.')).show()
```
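Since the image and text embeddings live in a shared space, a text query can be used to rank images. The sketch below assumes the vectors have already been pulled out of the two pipelines (presumably via `.get()` on the pipeline result in Towhee 1.x) and converted to plain lists; the gallery file names and vector values are hypothetical stand-ins, and `rank_images` is an illustrative helper, not part of Towhee.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def rank_images(
    text_vec: Sequence[float],
    image_vecs: Dict[str, Sequence[float]],
    top_k: int = 3,
) -> List[Tuple[str, float]]:
    """Hypothetical helper: return the top_k images most similar to a text embedding."""
    scores = [(name, cosine_similarity(text_vec, vec)) for name, vec in image_vecs.items()]
    scores.sort(key=lambda item: item[1], reverse=True)
    return scores[:top_k]


# Stand-in vectors; real ones would be the 'vec' outputs of img_pipe and text_pipe.
gallery = {
    "teddy.jpg": [0.8, 0.6, 0.0],
    "cat.jpg": [0.0, 0.6, 0.8],
    "car.jpg": [0.0, 0.0, 1.0],
}
query = [0.9, 0.4, 0.1]

for name, score in rank_images(query, gallery, top_k=2):
    print(f"{name}: {score:.3f}")
```

A brute-force scan like this is fine for a handful of images; for large galleries the vectors would normally go into a vector database instead.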

Factory Constructor

Create the operator via the following factory method:

lightningdot(model_name, modality)

Parameters:

model_name: str

The model name of LightningDOT. Supported model names:

- lightningdot_base

- lightningdot_coco_ft

- lightningdot_flickr_ft

modality: str

Which modality (image or text) to generate the embedding for.
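The two parameters accept only the values listed above. As a minimal sketch, the hypothetical helper below (not part of Towhee, which may perform its own checks) validates the arguments before an operator is built, using exactly the model names and modalities documented in this section.

```python
from typing import Tuple

# Values copied from the documented parameter lists.
SUPPORTED_MODEL_NAMES: Tuple[str, ...] = (
    "lightningdot_base",
    "lightningdot_coco_ft",
    "lightningdot_flickr_ft",
)
SUPPORTED_MODALITIES: Tuple[str, ...] = ("image", "text")


def check_lightningdot_args(model_name: str, modality: str) -> None:
    """Hypothetical pre-flight check; raises ValueError on an unsupported value."""
    if model_name not in SUPPORTED_MODEL_NAMES:
        raise ValueError(
            f"unsupported model_name {model_name!r}; expected one of {SUPPORTED_MODEL_NAMES}"
        )
    if modality not in SUPPORTED_MODALITIES:
        raise ValueError(
            f"unsupported modality {modality!r}; expected one of {SUPPORTED_MODALITIES}"
        )


# Usage: validate a configuration, e.g. the coco_ft checkpoint with the text modality.
check_lightningdot_args("lightningdot_coco_ft", "text")
```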

Interface

An image-text embedding operator takes a Towhee image or a string as input and generates an embedding as a numpy.ndarray.

Parameters:

data: towhee.types.Image (a sub-class of numpy.ndarray) or str

The data (image or text, depending on the specified modality) to generate the embedding for.

Returns: numpy.ndarray

The embedding extracted by the model.
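This README does not state whether the returned embedding is already unit-length. If a downstream index scores by inner product, one common approach, assumed here, is to L2-normalize each vector first so that the dot product equals the cosine similarity the model was trained on. The sketch uses plain Python lists as stand-ins for the returned numpy.ndarray.

```python
import math
from typing import List, Sequence


def l2_normalize(vec: Sequence[float]) -> List[float]:
    """Scale a vector to unit length so that dot products equal cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vec]


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


# Stand-in embeddings; real ones would be the arrays returned by the operator.
u = l2_normalize([3.0, 4.0])  # [0.6, 0.8]
v = l2_normalize([4.0, 3.0])  # [0.8, 0.6]
print(round(dot(u, v), 4))    # 0.96
```

With numpy the same normalization is `vec / np.linalg.norm(vec)`.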

More Resources

- Sparse and Dense Embeddings - Zilliz blog: Learn about sparse and dense embeddings, their use cases, and a text classification example using these embeddings.

- Building A Trademark Image Search System with Milvus - Zilliz blog: Learn how to use a vector database to build your own trademark image similarity search system that could save you from intellectual property lawsuits.

- Training Your Own Text Embedding Model - Zilliz blog: Explore how to train your text embedding model using the sentence-transformers library and generate our training data by leveraging a pre-trained LLM.

- Supercharged Semantic Similarity Search in Production - Zilliz blog: Building a Blazing Fast, Highly Scalable Text-to-Image Search with CLIP embeddings and Milvus, the most advanced open-source vector database.

- An LLM Powered Text to Image Prompt Generation with Milvus - Zilliz blog: An interesting LLM project powered by the Milvus vector database for generating more efficient text-to-image prompts.

- Hybrid Search: Combining Text and Image for Enhanced Search Capabilities - Zilliz blog: Milvus enables hybrid sparse and dense vector search and multi-vector search capabilities, simplifying the vectorization and search process.

- Build a Multimodal Search System with Milvus - Zilliz blog: Implementing a Multimodal Similarity Search System Using Milvus, Radient, ImageBind, and Meta-Chameleon-7b

- Image Embeddings for Enhanced Image Search - Zilliz blog: Image Embeddings are the core of modern computer vision algorithms. Understand their implementation and use cases and explore different image embedding models.
