image-text-embedding

Russian Image-Text Retrieval Embedding with CLIP

author: David Wang

Description

This operator extracts image or text features with CLIP, which produces embeddings for both modalities by jointly training an image encoder and a text encoder to maximize the cosine similarity between matching image-text pairs. This is a Russian version of CLIP adapted from ai-forever/ru-clip.
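To make the training objective above concrete, here is a minimal sketch of cosine similarity between two embedding vectors, written with the standard library only. The three-dimensional vectors are made-up stand-ins, not real model output, and `cosine_similarity` is an illustrative helper, not part of this operator.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


# Toy 3-dimensional stand-ins for real embeddings (made-up values).
image_vec = [0.2, 0.9, 0.1]
matching_text_vec = [0.25, 0.85, 0.05]
unrelated_text_vec = [0.9, -0.1, 0.3]

# A matching caption should score higher than an unrelated one.
print(cosine_similarity(image_vec, matching_text_vec))
print(cosine_similarity(image_vec, unrelated_text_vec))
```

A score close to 1 means the two vectors point in nearly the same direction, which is what training encourages for an image and its caption.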

Code Example

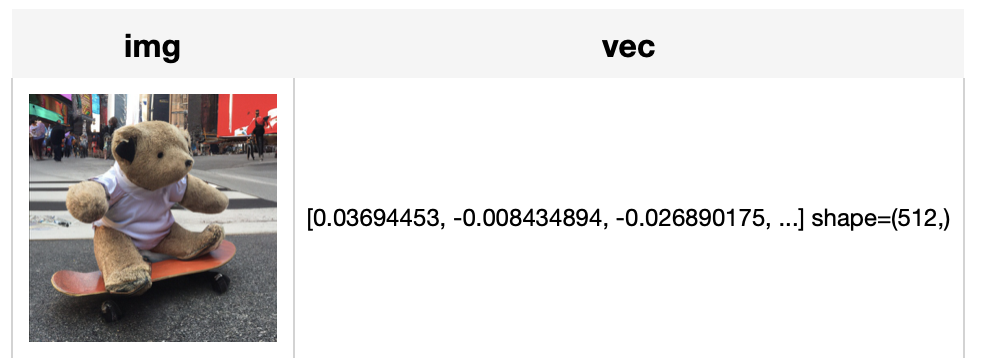

Load an image from path './teddy.jpg' to generate an image embedding.

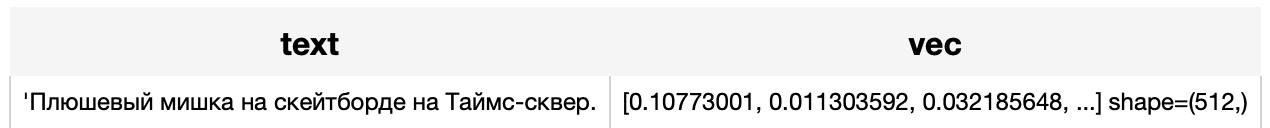

Read the text 'Плюшевый мишка на скейтборде на Таймс-сквер.' ("A teddy bear on a skateboard in Times Square.") to generate a text embedding.

Write a pipeline with explicit input and output names:

    from towhee import pipe, ops, DataCollection

    # Image pipeline: decode the file at 'url' to RGB, then embed it with ruCLIP.
    img_pipe = (
        pipe.input('url')
        .map('url', 'img', ops.image_decode.cv2_rgb())
        .map('img', 'vec', ops.image_text_embedding.ru_clip(model_name='ruclip-vit-base-patch32-224', modality='image'))
        .output('img', 'vec')
    )

    # Text pipeline: embed the input string with the same model.
    text_pipe = (
        pipe.input('text')
        .map('text', 'vec', ops.image_text_embedding.ru_clip(model_name='ruclip-vit-base-patch32-224', modality='text'))
        .output('text', 'vec')
    )

    # Display the results as tables.
    DataCollection(img_pipe('./teddy.jpg')).show()
    DataCollection(text_pipe('Плюшевый мишка на скейтборде на Таймс-сквер.')).show()

Factory Constructor

Create the operator via the following factory method:

ru_clip(model_name, modality)

Parameters:

model_name: str

The model name of CLIP. Supported model names:

- ruclip-vit-base-patch32-224

- ruclip-vit-base-patch16-224

- ruclip-vit-large-patch14-224

- ruclip-vit-large-patch14-336

- ruclip-vit-base-patch32-384

- ruclip-vit-base-patch16-384

modality: str

The modality (image or text) for which the embedding is generated.
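As a small illustration of the two parameters, the sketch below checks a `model_name` and `modality` pair against the values listed above before the operator is built. The helper `check_ru_clip_args` is hypothetical and not part of towhee or this operator; the modality strings 'image' and 'text' are taken from the code example.

```python
# Model names copied from the list of supported names above.
SUPPORTED_MODELS = (
    "ruclip-vit-base-patch32-224",
    "ruclip-vit-base-patch16-224",
    "ruclip-vit-large-patch14-224",
    "ruclip-vit-large-patch14-336",
    "ruclip-vit-base-patch32-384",
    "ruclip-vit-base-patch16-384",
)
SUPPORTED_MODALITIES = ("image", "text")


def check_ru_clip_args(model_name: str, modality: str) -> None:
    """Raise ValueError if either argument is not a documented value.

    Hypothetical helper for illustration only.
    """
    if model_name not in SUPPORTED_MODELS:
        raise ValueError(
            f"unsupported model_name {model_name!r}; "
            f"expected one of {', '.join(SUPPORTED_MODELS)}"
        )
    if modality not in SUPPORTED_MODALITIES:
        raise ValueError(
            f"unsupported modality {modality!r}; expected 'image' or 'text'"
        )


# Passes silently for a documented combination.
check_ru_clip_args("ruclip-vit-base-patch32-224", "image")
```

Checking the arguments up front gives a clear error message before any model weights are loaded.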

Interface

An image-text embedding operator takes a towhee image or a string as input and generates an embedding as an ndarray.

Parameters:

data: towhee.types.Image (a sub-class of numpy.ndarray) or str

The data (image or text, depending on the specified modality) for which to generate an embedding.

Returns: numpy.ndarray

The embedding of the data extracted by the model.
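Because image and text embeddings live in the same vector space, the returned vectors can be used for retrieval. The following is a minimal sketch, not the operator's own code: it L2-normalizes each vector and ranks candidate images by dot product against a text query. The function names, the file names other than `teddy.jpg`, and all vector values are made up for illustration; real embeddings are much longer. The helpers accept any sequence of floats, so a one-dimensional ndarray should work as well.

```python
import math
from typing import Dict, List, Sequence, Tuple


def l2_normalize(vec: Sequence[float]) -> List[float]:
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vec]


def rank_images(
    text_vec: Sequence[float],
    image_vecs: Dict[str, Sequence[float]],
    top_k: int = 3,
) -> List[Tuple[str, float]]:
    """Return up to top_k (name, score) pairs, best match first.

    The score is the dot product of unit vectors, i.e. cosine similarity.
    """
    query = l2_normalize(text_vec)
    scored = []
    for name, vec in image_vecs.items():
        unit = l2_normalize(vec)
        scored.append((name, sum(q * v for q, v in zip(query, unit))))
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


# Made-up 3-dimensional stand-ins for a text 'vec' and several image 'vec's.
text_vec = [0.1, 0.8, 0.2]
image_vecs = {
    "teddy.jpg": [0.2, 0.9, 0.1],
    "car.jpg": [0.9, 0.1, 0.0],
    "cat.jpg": [0.3, 0.3, 0.8],
}

for name, score in rank_images(text_vec, image_vecs, top_k=2):
    print(f"{name}: {score:.3f}")
```

For a large image collection, the same ranking would normally be delegated to a vector database rather than computed in a Python loop.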

More Resources

- CLIP Object Detection: Merging AI Vision with Language Understanding - Zilliz blog: CLIP Object Detection combines CLIP's text-image understanding with object detection tasks, allowing CLIP to locate and identify objects in images using texts.

- Supercharged Semantic Similarity Search in Production - Zilliz blog: Building a Blazing Fast, Highly Scalable Text-to-Image Search with CLIP embeddings and Milvus, the most advanced open-source vector database.

- The guide to clip-vit-base-patch32 | OpenAI: clip-vit-base-patch32: a CLIP multimodal model variant by OpenAI for image and text embedding.

- Exploring OpenAI CLIP: The Future of Multi-Modal AI Learning - Zilliz blog: Multimodal AI learning can get input and understand information from various modalities like text, images, and audio together, leading to a deeper understanding of the world. Learn more about OpenAI's CLIP (Contrastive Language-Image Pre-training), a popular multimodal model for text and image data.

- Image Embeddings for Enhanced Image Search - Zilliz blog: Image Embeddings are the core of modern computer vision algorithms. Understand their implementation and use cases and explore different image embedding models.

- From Text to Image: Fundamentals of CLIP - Zilliz blog: Search algorithms rely on semantic similarity to retrieve the most relevant results. With the CLIP model, the semantics of texts and images can be connected in a high-dimensional vector space. Read this simple introduction to see how CLIP can help you build a powerful text-to-image service.
