img2img-translation

Animating using AnimeGanV2

author: Filip Haltmayer

Description

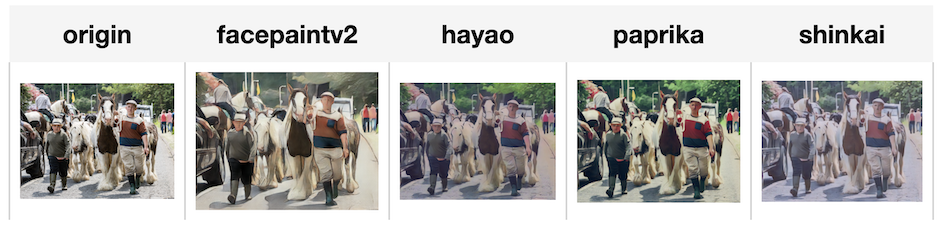

Convert an image into an anime-style image using AnimeGANv2.

Code Example

Load an image from path './test.png'.

Write the pipeline in simplified style:

import towhee

towhee.glob('./test.png') \
    .image_decode() \
    .img2img_translation.animegan(model_name='hayao') \
    .show()

Write the same pipeline with explicitly named inputs and outputs:

import towhee

towhee.glob['path']('./test.png') \
    .image_decode['path', 'origin']() \
    .img2img_translation.animegan['origin', 'facepaintv2'](model_name='facepaintv2') \
    .img2img_translation.animegan['origin', 'hayao'](model_name='hayao') \
    .img2img_translation.animegan['origin', 'paprika'](model_name='paprika') \
    .img2img_translation.animegan['origin', 'shinkai'](model_name='shinkai') \
    .select['origin', 'facepaintv2', 'hayao', 'paprika', 'shinkai']() \
    .show()

Factory Constructor

Create the operator via the following factory method:

img2img_translation.animegan(model_name = 'which anime model to use')

Model options:

- celeba

- facepaintv1

- facepaintv2

- hayao

- paprika

- shinkai
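
Because the model is selected by a plain string, a typo in `model_name` may only surface once the operator tries to load weights. The following is a minimal sketch of checking the name up front against the options listed above. `MODEL_OPTIONS` and `check_model_name` are hypothetical names used for illustration and are not part of towhee; the sketch also assumes the names are case-sensitive, which this README does not state.

```python
import difflib

# Model names copied from the "Model options" list above.
MODEL_OPTIONS = ('celeba', 'facepaintv1', 'facepaintv2', 'hayao', 'paprika', 'shinkai')


def check_model_name(name):
    """Return `name` unchanged if it is a listed option, else raise ValueError.

    Hypothetical helper for illustration; not part of towhee.
    """
    if name in MODEL_OPTIONS:
        return name
    # Suggest the closest listed option, if one is reasonably similar.
    close = difflib.get_close_matches(name, MODEL_OPTIONS, n=1)
    hint = f" Did you mean {close[0]!r}?" if close else ""
    raise ValueError(f"Unknown model_name {name!r}.{hint}")
```

The returned value can then be passed as `model_name` to `img2img_translation.animegan(...)`.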

Interface

Takes a NumPy RGB image in channels-first layout and transforms it into an anime-style image, returned as a NumPy array.

Parameters:

model_name: str

Which model to use for the style transfer.

framework: str

Which ML framework to use. Currently only PyTorch is supported.

device: str

Which device to run on ('cpu' or 'cuda'). Defaults to 'cpu'.

Returns: towhee.types.Image (a sub-class of numpy.ndarray)

The new image.
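
The three parameters above can be gathered and checked in one place before constructing the operator. This is a sketch under stated assumptions, not the operator's own code: `AnimeganParams` is a hypothetical container, the `'pytorch'` default for `framework` is assumed (this README gives no default for it), and only the two device strings named above are accepted.

```python
from dataclasses import dataclass, asdict

SUPPORTED_FRAMEWORKS = ('pytorch',)  # the README lists PyTorch as the only supported framework
SUPPORTED_DEVICES = ('cpu', 'cuda')  # the two device strings named in the README


@dataclass(frozen=True)
class AnimeganParams:
    """Hypothetical container for the operator's parameters; not part of towhee."""
    model_name: str
    framework: str = 'pytorch'  # assumed default; the README does not state one
    device: str = 'cpu'         # default stated in the README

    def __post_init__(self):
        # Reject values the README does not list as supported.
        if self.framework not in SUPPORTED_FRAMEWORKS:
            raise ValueError(f"unsupported framework: {self.framework!r}")
        if self.device not in SUPPORTED_DEVICES:
            raise ValueError(f"unsupported device: {self.device!r}")


params = AnimeganParams(model_name='hayao')
kwargs = asdict(params)  # plain dict of keyword arguments
```

The resulting `kwargs` dictionary could then be unpacked into the factory call, for example `img2img_translation.animegan(**kwargs)`, assuming the operator accepts all three keywords as documented above.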

Reference

Jie Chen, Gang Liu, Xin Chen "AnimeGAN: A Novel Lightweight GAN for Photo Animation." ISICA 2019: Artificial Intelligence Algorithms and Applications pp 242-256, 2019.
