
Text Embedding with DPR

author: Kyle He

Description

This operator uses Dense Passage Retrieval (DPR) to convert text, such as a sentence or a passage, into a dense vector embedding.
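The DPR checkpoints supported here are BERT-base encoders, which accept at most 512 tokens per input. This README does not say how the operator handles longer inputs (truncation or an error). A common approach, and the one the DPR paper used for Wikipedia, is to split a document into fixed-size passages (100 words in the paper) and embed each passage separately. The sketch below uses a hypothetical helper, `chunk_words`, written for illustration only. It splits on whitespace, so it counts words rather than model tokens.

```python
from typing import List


def chunk_words(text: str, max_words: int = 100) -> List[str]:
    """Split text into consecutive passages of at most `max_words` words.

    Whitespace splitting is a simplification: it does not count model
    tokens, so a chunk can still exceed the encoder's token limit if the
    words tokenize into many sub-word pieces.
    """
    if max_words <= 0:
        raise ValueError("max_words must be positive")
    words = text.split()
    return [
        " ".join(words[i:i + max_words])
        for i in range(0, len(words), max_words)
    ]


if __name__ == "__main__":
    # A synthetic 250-word document.
    doc = " ".join(f"w{i}" for i in range(250))
    passages = chunk_words(doc, max_words=100)
    print([len(p.split()) for p in passages])  # [100, 100, 50]
```

Each resulting passage could then be passed to the operator on its own.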

Dense Passage Retrieval (DPR) is a set of tools and models for state-of-the-art open-domain question answering (Q&A) research[1]. It was introduced in "Dense Passage Retrieval for Open-Domain Question Answering" by Vladimir Karpukhin, Barlas Oğuz, Sewon Min, Patrick Lewis, Ledell Wu, Sergey Edunov, Danqi Chen, and Wen-tau Yih[2].

The authors show that retrieval can be implemented in practice using dense representations alone, with embeddings learned from a relatively small number of questions and passages by a simple dual-encoder framework[2].
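In DPR's dual-encoder setup, a question encoder E_Q and a passage (context) encoder E_P each map text to a vector, and a passage p is scored against a question q by the inner product sim(q, p) = E_Q(q)^T E_P(p)[2]. Both model names supported by this operator are context encoders, so queries would be embedded with the matching DPR question encoder. The minimal sketch below assumes the embeddings have already been computed and ranks passages by inner product with NumPy. The toy 4-dimensional vectors are placeholders for real 768-dimensional DPR outputs, and `rank_passages` is a hypothetical name.

```python
import numpy as np


def rank_passages(question_vec: np.ndarray, passage_vecs: np.ndarray, k: int = 3):
    """Return indices and scores of the top-k passages by inner product.

    question_vec: shape (d,), assumed to come from a DPR question encoder.
    passage_vecs: shape (n, d), assumed to come from a DPR context encoder.
    """
    scores = passage_vecs @ question_vec   # (n,) inner products
    k = min(k, len(scores))
    top = np.argsort(-scores)[:k]          # highest score first
    return top, scores[top]


if __name__ == "__main__":
    # Toy vectors standing in for real DPR embeddings.
    q = np.array([1.0, 0.0, 1.0, 0.0])
    passages = np.array([
        [0.0, 1.0, 0.0, 1.0],   # orthogonal to q, score 0
        [1.0, 0.0, 1.0, 0.0],   # same direction as q, score 2
        [0.5, 0.5, 0.5, 0.5],   # partial overlap, score 1
    ])
    idx, s = rank_passages(q, passages, k=2)
    print(idx, s)  # [1 2] [2. 1.]
```

A brute-force matrix product like this is fine for small collections; for large ones, the same inner-product scoring is usually delegated to a vector index.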

References

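[1] Hugging Face Transformers documentation, DPR: https://huggingface.co/docs/transformers/model_doc/dpr

[2] Karpukhin et al., "Dense Passage Retrieval for Open-Domain Question Answering": https://arxiv.org/abs/2004.04906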

Code Example

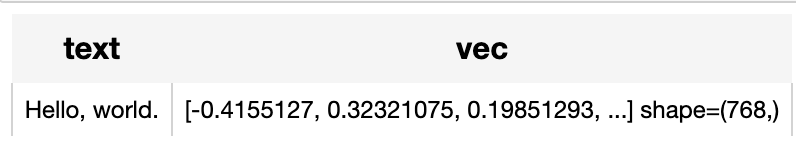

Use the pre-trained model "facebook/dpr-ctx_encoder-single-nq-base" to generate a text embedding for the sentence "Hello, world.".

Write the pipeline:

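```python
from towhee import pipe, ops, DataCollection

# Pipeline: take a text string, embed it with DPR, and output both the text and its vector.
p = (
    pipe.input('text')
        .map('text', 'vec', ops.text_embedding.dpr(model_name='facebook/dpr-ctx_encoder-single-nq-base'))
        .output('text', 'vec')
)

# Run the pipeline on one sentence and display the result as a table.
DataCollection(p('Hello, world.')).show()
```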

Factory Constructor

Create the operator via the following factory method:

```python
text_embedding.dpr(model_name="facebook/dpr-ctx_encoder-single-nq-base")
```

Parameters:

model_name: str

The name of the pre-trained DPR model. Defaults to "facebook/dpr-ctx_encoder-single-nq-base".

Supported model names:

- facebook/dpr-ctx_encoder-single-nq-base

- facebook/dpr-ctx_encoder-multiset-base
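DPR trains a question encoder and a context encoder as a pair, so queries should be embedded with the question encoder trained together with the context encoder used for the passages. The hypothetical helpers below (`resolve_model_name`, `matching_question_encoder`) check a name against the supported list above and look up its companion question encoder. The question-encoder names are the companion checkpoints published under `facebook/` on the Hugging Face Hub. This operator is not documented to perform either step itself.

```python
from typing import Dict

# Supported context encoders (from the list above) mapped to the DPR
# question encoders trained alongside them. The pairing is an
# illustrative assumption, not part of the operator's API.
CTX_TO_QUESTION_ENCODER: Dict[str, str] = {
    "facebook/dpr-ctx_encoder-single-nq-base": "facebook/dpr-question_encoder-single-nq-base",
    "facebook/dpr-ctx_encoder-multiset-base": "facebook/dpr-question_encoder-multiset-base",
}

DEFAULT_MODEL = "facebook/dpr-ctx_encoder-single-nq-base"


def resolve_model_name(model_name: str = DEFAULT_MODEL) -> str:
    """Hypothetical helper: raise ValueError for names outside the supported list."""
    if model_name not in CTX_TO_QUESTION_ENCODER:
        supported = ", ".join(sorted(CTX_TO_QUESTION_ENCODER))
        raise ValueError(f"Unsupported model_name {model_name!r}; expected one of: {supported}")
    return model_name


def matching_question_encoder(model_name: str = DEFAULT_MODEL) -> str:
    """Return the question encoder paired with the given context encoder."""
    return CTX_TO_QUESTION_ENCODER[resolve_model_name(model_name)]


if __name__ == "__main__":
    print(matching_question_encoder())  # facebook/dpr-question_encoder-single-nq-base
```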

Interface

The operator takes a text string as input. It uses the tokenizer and pre-trained model loaded for `model_name` to encode the text, then returns the text embedding as a NumPy ndarray.

Parameters:

text: str

The input text.

Returns:

numpy.ndarray

The text embedding produced by the model.
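This README does not say whether the returned array has shape (d,) or (1, d), where d = 768 for these BERT-base checkpoints. Before inserting vectors into a vector index, it can help to normalize that to a flat float32 vector. The sketch below uses a hypothetical `to_index_vector` helper that accepts either shape. L2 normalization is optional and off by default, because DPR was trained to score by inner product, and normalizing turns inner product into cosine similarity, which can change the ranking.

```python
import numpy as np


def to_index_vector(vec, normalize: bool = False) -> np.ndarray:
    """Return `vec` as a 1-D float32 array, optionally L2-normalized.

    Accepts shape (d,) or (1, d); any other shape raises ValueError.
    """
    arr = np.asarray(vec, dtype=np.float32)
    if arr.ndim == 2 and arr.shape[0] == 1:
        arr = arr[0]  # drop a leading batch dimension of size 1
    if arr.ndim != 1:
        raise ValueError(f"expected shape (d,) or (1, d), got {arr.shape}")
    if normalize:
        norm = np.linalg.norm(arr)
        if norm > 0:  # leave an all-zero vector unchanged
            arr = (arr / norm).astype(np.float32)
    return arr


if __name__ == "__main__":
    # Placeholder standing in for an embedding returned by the operator.
    fake = np.ones((1, 768))
    v = to_index_vector(fake, normalize=True)
    print(v.shape, v.dtype)  # (768,) float32
```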

More Resources

- The guide to text-embedding-ada-002 model | OpenAI: text-embedding-ada-002: OpenAI's legacy text embedding model; average price/performance compared to text-embedding-3-large and text-embedding-3-small.

- Sentence Transformers for Long-Form Text - Zilliz blog: Deep diving into modern transformer-based embeddings for long-form text.

- Building Open Source Chatbots with LangChain and Milvus in 5m - Zilliz blog: A start-to-finish tutorial for RAG retrieval and question-answering chatbot on custom documents using Milvus, LangChain, and an open-source LLM.

- Tutorial: Diving into Text Embedding Models | Zilliz Webinar: Register for a free webinar diving into text embedding models in a presentation and tutorial

- The guide to text-embedding-3-small | OpenAI: text-embedding-3-small: OpenAI's small text embedding model optimized for accuracy and efficiency with a lower cost.

- Evaluating Your Embedding Model - Zilliz blog: Review some practical examples to evaluate different text embedding models.

- The guide to voyage-large-2 | Voyage AI: voyage-large-2: general-purpose text embedding model; optimized for retrieval quality; ideal for tasks like summarization, clustering, and classification.
