Updated 2 years ago

video-text-embedding

Video-Text Retrieval Embedding with Frozen In Time

author: Jinling Xu

Description

This operator extracts features for video or text with Frozen In Time, which learns a shared embedding space by jointly training a video encoder and a text encoder so that the cosine similarity between matching video-text pairs is maximized.
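The joint training objective can be illustrated with a generic symmetric contrastive (InfoNCE-style) loss over a batch of paired embeddings, where pair `i` of the video and text lists is the positive and every other pairing is a negative. This is a minimal pure-Python sketch under stated assumptions: the function names are hypothetical, the temperature of 0.05 is an illustrative value, and the exact loss and hyperparameters used to train Frozen In Time are not specified in this README.

```python
import math
from typing import List

Vector = List[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def _cross_entropy_diagonal(logits: List[List[float]]) -> float:
    """Mean cross-entropy where the correct class for row i is column i."""
    total = 0.0
    for i, row in enumerate(logits):
        m = max(row)  # subtract the max for numerical stability
        log_sum_exp = m + math.log(sum(math.exp(v - m) for v in row))
        total += log_sum_exp - row[i]
    return total / len(logits)


def symmetric_contrastive_loss(video_embs: List[Vector], text_embs: List[Vector],
                               temperature: float = 0.05) -> float:
    """Hypothetical InfoNCE-style loss; temperature is an illustrative assumption."""
    sims = [[cosine_similarity(v, t) / temperature for t in text_embs] for v in video_embs]
    # Video-to-text direction: each video should pick its own caption.
    v2t = _cross_entropy_diagonal(sims)
    # Text-to-video direction: transpose so each caption picks its own video.
    t2v = _cross_entropy_diagonal([list(col) for col in zip(*sims)])
    return (v2t + t2v) / 2


# Toy 2-D embeddings: aligned pairs should give a lower loss than swapped ones.
videos = [[1.0, 0.0], [0.0, 1.0]]
texts_aligned = [[0.9, 0.1], [0.1, 0.9]]
texts_swapped = [[0.1, 0.9], [0.9, 0.1]]
print(symmetric_contrastive_loss(videos, texts_aligned) < symmetric_contrastive_loss(videos, texts_swapped))  # True
```

Minimizing this loss pushes the cosine similarity of each matching pair up relative to the mismatched pairs in the batch, which is what makes the two encoders' outputs comparable at retrieval time.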

Code Example

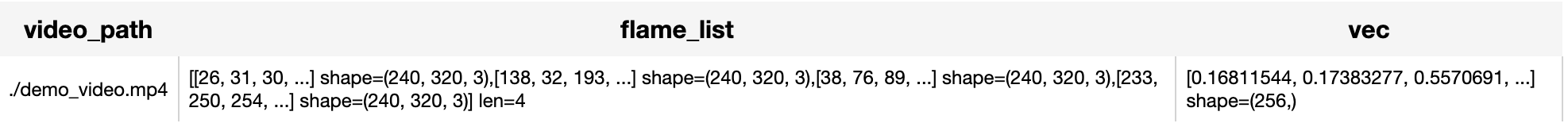

Load a video from path './demo_video.mp4' to generate a video embedding.

- Encode video (default):

```python
from towhee import pipe, ops, DataCollection

p = (
    pipe.input('video_path')
        # Decode the video, keeping 4 frames sampled uniformly across its duration
        .map('video_path', 'frame_gen', ops.video_decode.ffmpeg(sample_type='uniform_temporal_subsample', args={'num_samples': 4}))
        # Collect the decoded frames into a list for the embedding operator
        .map('frame_gen', 'frame_list', lambda x: [y for y in x])
        # Embed the frame list with the video encoder
        .map('frame_list', 'vec', ops.video_text_embedding.frozen_in_time(model_name='frozen_in_time_base_16_244', modality='video', device='cpu'))
        .output('video_path', 'frame_list', 'vec')
)

DataCollection(p('./demo_video.mp4')).show()
```

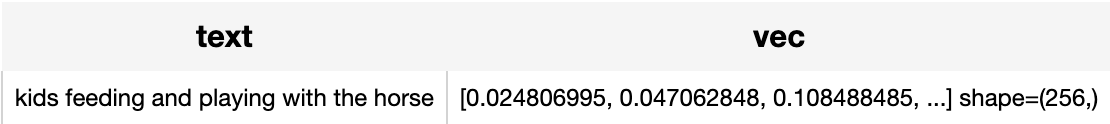
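The `sample_type='uniform_temporal_subsample'` setting with `num_samples: 4` keeps four frames spread evenly across the clip. The sketch below shows one common way to choose those frame indices: evenly spaced points from the first to the last frame, truncated to integers, as in PyTorchVideo's `uniform_temporal_subsample`. It is an assumption that the `ffmpeg` decoder op computes its indices exactly this way, and `uniform_temporal_indices` is a hypothetical name.

```python
from typing import List


def uniform_temporal_indices(total_frames: int, num_samples: int) -> List[int]:
    """Hypothetical helper: evenly spaced frame indices over [0, total_frames - 1].

    Mirrors linspace(0, total_frames - 1, num_samples) followed by truncation to int.
    """
    if total_frames <= 0 or num_samples <= 0:
        return []
    if num_samples == 1:
        return [0]  # a one-point linspace contains only the start
    step = (total_frames - 1) / (num_samples - 1)
    return [int(i * step) for i in range(num_samples)]


print(uniform_temporal_indices(100, 4))  # [0, 33, 66, 99]
```

With this scheme the first and last frames are always included, and a clip shorter than `num_samples` frames yields repeated indices rather than fewer frames.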

Read the text 'kids feeding and playing with the horse' to generate a text embedding.

- Encode text:

```python
from towhee import pipe, ops, DataCollection

p = (
    pipe.input('text')
        # Embed the input string with the text encoder
        .map('text', 'vec', ops.video_text_embedding.frozen_in_time(model_name='frozen_in_time_base_16_244', modality='text', device='cpu'))
        .output('text', 'vec')
)

DataCollection(p('kids feeding and playing with the horse')).show()
```
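Once both pipelines produce vectors, text-to-video retrieval amounts to ranking video embeddings by their cosine similarity to the text embedding. Below is a minimal pure-Python sketch; the helper name `rank_videos`, the video IDs, and the toy 3-dimensional vectors are hypothetical stand-ins for the `vec` outputs of the two pipelines (real embeddings have many more dimensions).

```python
import math
from typing import Dict, List, Tuple


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def rank_videos(text_vec: List[float], video_vecs: Dict[str, List[float]],
                top_k: int = 3) -> List[Tuple[str, float]]:
    """Hypothetical helper: return the top_k (video_id, score) pairs, best first."""
    scored = [(vid, cosine_similarity(text_vec, vec)) for vid, vec in video_vecs.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Toy vectors standing in for pipeline outputs (illustrative values only).
query = [0.9, 0.1, 0.0]
library = {
    'horse_kids.mp4': [0.8, 0.2, 0.1],
    'city_traffic.mp4': [0.0, 0.1, 0.9],
    'beach.mp4': [0.2, 0.9, 0.1],
}
for video_id, score in rank_videos(query, library, top_k=2):
    print(video_id, round(score, 3))
```

A linear scan like this is fine for a handful of clips; for large collections the same ranking is usually delegated to a vector database.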

Factory Constructor

Create the operator via the following factory method:

frozen_in_time(model_name, modality, weight_path)

Parameters:

model_name: str

The name of the Frozen In Time model. Supported model names:

- frozen_in_time_base_16_244

modality: str

The modality to generate the embedding for: 'video' or 'text'.

weight_path: str

Path to the pretrained model weights.
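When the operator is built from user configuration, it can help to reject unsupported arguments before any model weights are loaded. The helper below is a hypothetical caller-side check, not part of Towhee: it only encodes the values documented above (`frozen_in_time_base_16_244` as the sole listed model name, and `video` or `text` as the modality) and returns keyword arguments that could be passed to `ops.video_text_embedding.frozen_in_time`.

```python
from typing import Dict, Optional

# Values documented in this README; the operator itself may accept more.
SUPPORTED_MODEL_NAMES = {'frozen_in_time_base_16_244'}
SUPPORTED_MODALITIES = {'video', 'text'}


def build_frozen_in_time_kwargs(model_name: str, modality: str,
                                weight_path: Optional[str] = None) -> Dict[str, str]:
    """Hypothetical helper: validate arguments and return them as keyword arguments."""
    if model_name not in SUPPORTED_MODEL_NAMES:
        raise ValueError(f'unsupported model_name: {model_name!r}')
    if modality not in SUPPORTED_MODALITIES:
        raise ValueError(f"modality must be 'video' or 'text', got {modality!r}")
    kwargs = {'model_name': model_name, 'modality': modality}
    if weight_path is not None:  # leave it out to keep the operator's default weights
        kwargs['weight_path'] = weight_path
    return kwargs


print(build_frozen_in_time_kwargs('frozen_in_time_base_16_244', 'text'))
# {'model_name': 'frozen_in_time_base_16_244', 'modality': 'text'}
```

The result could then be unpacked into the factory call, e.g. `ops.video_text_embedding.frozen_in_time(**kwargs, device='cpu')`, matching the keyword style of the examples.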

Interface

A video-text embedding operator takes a list of Towhee VideoFrame objects or a string as input and generates an embedding as a numpy.ndarray.

Parameters:

data: List[towhee.types.Image] or str

The data to embed, according to the specified modality: a list of Towhee VideoFrame objects uniformly subsampled from a video, or a text string.

Returns: numpy.ndarray

The embedding extracted by the model.
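If embeddings are stored for similarity search with an inner-product metric, normalizing them to unit length first makes the inner product equal to cosine similarity. This README does not say whether the returned ndarray is already normalized, so the sketch below is an assumption-level illustration using plain Python lists and a hypothetical `l2_normalize` helper; with NumPy the same step is `vec / np.linalg.norm(vec)`.

```python
import math
from typing import List


def l2_normalize(vec: List[float]) -> List[float]:
    """Hypothetical helper: scale a vector to unit Euclidean length."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm == 0.0:
        raise ValueError('cannot normalize a zero vector')
    return [x / norm for x in vec]


def inner_product(a: List[float], b: List[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


a = l2_normalize([3.0, 4.0])  # [0.6, 0.8]
b = l2_normalize([4.0, 3.0])  # [0.8, 0.6]
print(round(inner_product(a, b), 6))  # 0.96, the cosine similarity of the original vectors
```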

More Resources

- What is BERT (Bidirectional Encoder Representations from Transformers)? - Zilliz blog: Learn what Bidirectional Encoder Representations from Transformers (BERT) is and how it uses pre-training and fine-tuning to achieve its remarkable performance.

- The guide to mistral-embed | Mistral AI: mistral-embed: a specialized embedding model for text data with a context window of 8,000 tokens. Optimized for similarity retrieval and RAG applications.

- Supercharged Semantic Similarity Search in Production - Zilliz blog: Building a Blazing Fast, Highly Scalable Text-to-Image Search with CLIP embeddings and Milvus, the most advanced open-source vector database.

- Sparse and Dense Embeddings: A Guide for Effective Information Retrieval with Milvus | Zilliz Webinar: Zilliz webinar covering what sparse and dense embeddings are and when you'd want to use one over the other.

- Tutorial: Diving into Text Embedding Models | Zilliz Webinar: Register for a free webinar diving into text embedding models in a presentation and tutorial.

- The guide to all-MiniLM-L12-v2 | Hugging Face: all-MiniLM-L12-v2: a text embedding model ideal for semantic search and RAG and fine-tuned based on Microsoft/MiniLM-L12-H384-uncased.

- Enhancing Information Retrieval with Sparse Embeddings | Zilliz Learn - Zilliz blog: Explore the inner workings, advantages, and practical applications of learned sparse embeddings with the Milvus vector database.

Files and versions (28 commits):

| File | Size | Last updated |
|---|---|---|
| `.gitattributes` | 1.1 KiB | 4 years ago |
| `README.md` | 4.8 KiB | 2 years ago |
| `__init__.py` | 755 B | 4 years ago |
| `demo_video.mp4` | 950 KiB | 4 years ago |
| `frozen_in_time.py` | 4.8 KiB | 4 years ago |
| `frozen_in_time_base_16_224.pth` | 690 MiB | 4 years ago |
| `parse_config.py` | 7.0 KiB | 4 years ago |
| `requirements.txt` | 101 B | 4 years ago |
| `text_emb_result.png` | 14 KiB | 3 years ago |
| `video_emb_result.png` | 30 KiB | 3 years ago |